AI Integration Frameworks: Comparison

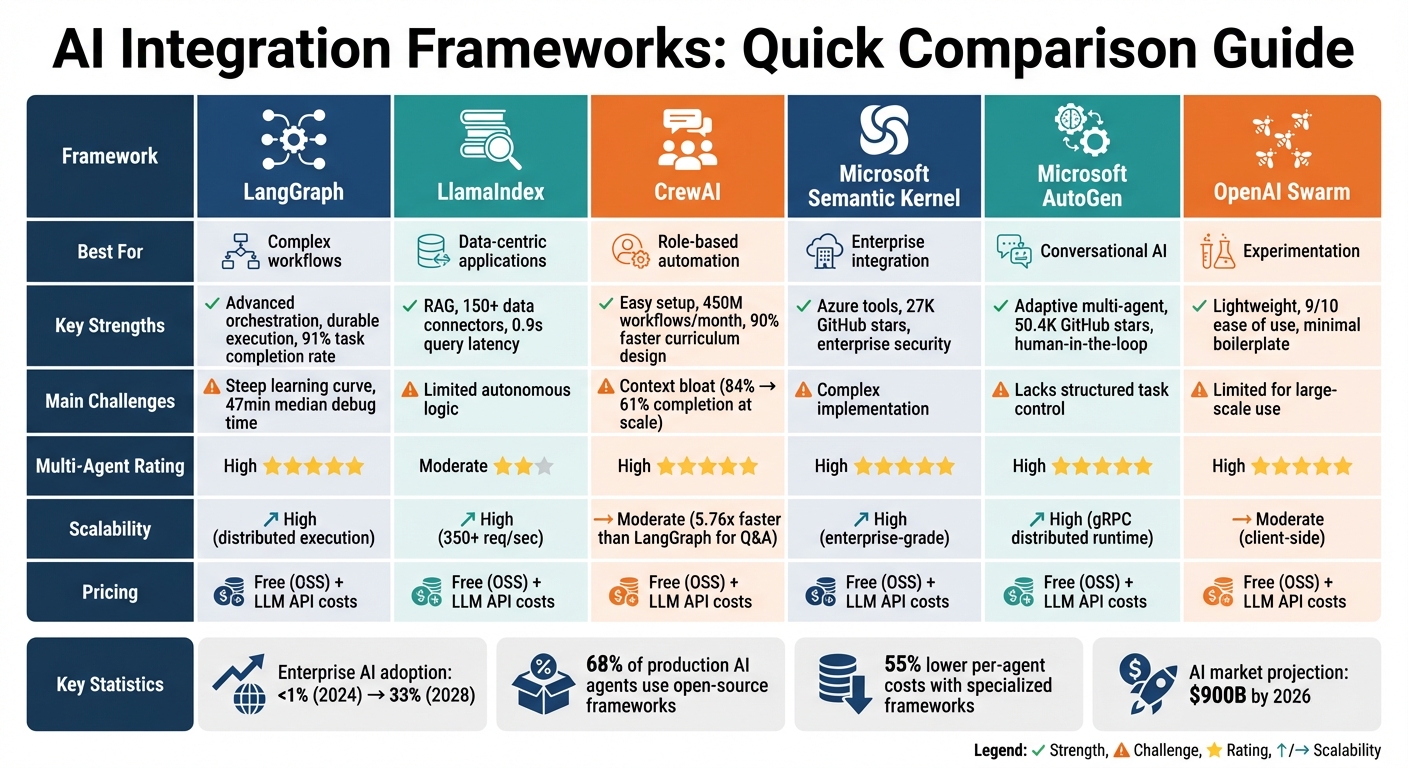

AI integration frameworks manage autonomous AI workflows, coordinate agents, and give large language models (LLMs) access to tools. The share of enterprise applications that include AI agents is projected to grow from under 1% in 2024 to over 33% by 2028, so the choice of framework matters. Here's a quick breakdown of the six major frameworks covered:

- LangGraph: Best for complex workflows with graph-based orchestration and multi-agent coordination.

- LlamaIndex: Focuses on connecting LLMs to data with retrieval-augmented generation (RAG).

- CrewAI: Mimics teamwork dynamics for role-based automation and rapid prototyping.

- Microsoft Semantic Kernel: Designed for enterprise integration with plugins and Azure compatibility.

- Microsoft AutoGen: Excels in conversational, multi-agent systems with human-in-the-loop options.

- OpenAI Swarm: Lightweight framework for experimentation and small-scale projects.

Quick Comparison

| Framework | Best For | Strengths | Challenges | Pricing |
|---|---|---|---|---|
| LangGraph | Complex workflows | Advanced orchestration, durable execution | Steep learning curve | Free (OSS) |
| LlamaIndex | Data-centric applications | RAG, strong data connectivity | Limited autonomous logic | Free (OSS) |
| CrewAI | Role-based automation | Easy setup, multi-agent focus | Context limitations | Free (OSS) |
| Semantic Kernel | Enterprise integration | Azure tools, scalability | Complex to implement | Free (OSS) |
| AutoGen | Conversational AI | Adaptive multi-agent systems | Lacks structured task control | Free (OSS) |
| OpenAI Swarm | Experimentation | Lightweight, easy to use | Limited for large-scale use | Free (OSS) |

Each framework has unique strengths. LangGraph is ideal for intricate workflows, while LlamaIndex is perfect for data-heavy tasks. CrewAI simplifies agent collaboration, and Microsoft frameworks (Semantic Kernel and AutoGen) cater to enterprise needs. OpenAI Swarm is great for small-scale projects or prototyping. Choose based on your project's complexity, scalability, and integration needs.

1. LangGraph

LangGraph organizes workflows into graphs, where nodes represent functions and edges signify transitions. This setup handles complex, non-linear workflows, including loops like Plan-Execute-Reflect and hierarchical structures where manager agents oversee sub-agents. By late 2025, LangGraph was in use by 600 to 800 companies - including LinkedIn, Uber, and Klarna - for advanced orchestration tasks. Its design lays the groundwork for effective multi-agent coordination, which we'll cover next.
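To make the node-and-edge idea concrete, here is a minimal sketch in plain Python of a Plan-Execute-Reflect loop. It does not use LangGraph's API: the `NODES` and `EDGES` tables, the `END` sentinel, and the `run_graph` helper are all hypothetical names chosen for illustration, and the "done after three attempts" rule is a toy stand-in for real validation.

```python
from typing import Any, Callable, Dict

State = Dict[str, Any]
END = "__end__"  # hypothetical sentinel naming the terminal state


def plan(state: State) -> State:
    # Planning node: decide what the next attempt should try.
    return {**state, "plan": f"attempt {state['attempts'] + 1}"}


def execute(state: State) -> State:
    # Execution node: pretend to do the work and count the attempt.
    return {**state, "attempts": state["attempts"] + 1}


def reflect(state: State) -> State:
    # Reflection node: mark the task done after three attempts (toy rule).
    return {**state, "done": state["attempts"] >= 3}


NODES: Dict[str, Callable[[State], State]] = {
    "plan": plan, "execute": execute, "reflect": reflect,
}

# Each edge is a function that picks the next node name from the state.
EDGES: Dict[str, Callable[[State], str]] = {
    "plan": lambda s: "execute",
    "execute": lambda s: "reflect",
    "reflect": lambda s: END if s["done"] else "plan",  # conditional edge (loop)
}


def run_graph(entry: str, state: State, max_steps: int = 50) -> State:
    # Walk the graph until END, with a step cap to guard against infinite loops.
    current = entry
    for _ in range(max_steps):
        if current == END:
            return state
        state = NODES[current](state)
        current = EDGES[current](state)
    raise RuntimeError("step limit reached before END")


final_state = run_graph("plan", {"attempts": 0, "done": False})
```

The conditional edge out of `reflect` is what makes the workflow non-linear: the same three nodes run repeatedly until the state says the work is finished.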

Multi-Agent Capabilities

LangGraph excels at enabling multiple agents to work together smoothly by managing state transitions. For example, in coding workflows, one agent can write code while another tests it until the code meets validation standards. With the addition of verification nodes, LangGraph achieved a 91% task completion rate in sequential tool-use benchmarks - the highest among major frameworks. Norwegian Cruise Line uses LangGraph to power guest-facing AI solutions requiring coordinated actions across various service areas.

Persistence

LangGraph includes versioned state management with checkpointing, allowing agents to save their progress and even "Time Travel" to replay any point in their history. It also supports durable execution, meaning workflows can resume after failures or extended idle periods. For production use, a durable checkpoint store like Postgres or Redis is required to avoid losing in-progress states during crashes.
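The checkpointing idea can be sketched without any framework. The example below is an assumption-laden illustration, not LangGraph's checkpointer: `CheckpointLog` is a hypothetical class that keeps snapshots in memory, whereas the text above calls for a durable store such as Postgres or Redis in production.

```python
import copy
from typing import Any, Dict, List


class CheckpointLog:
    """Keeps an append-only list of state snapshots, one per step."""

    def __init__(self) -> None:
        self._snapshots: List[Dict[str, Any]] = []

    def save(self, state: Dict[str, Any]) -> int:
        # Deep-copy so later mutations cannot alter saved history.
        self._snapshots.append(copy.deepcopy(state))
        return len(self._snapshots) - 1  # version number of this snapshot

    def load(self, version: int) -> Dict[str, Any]:
        # Return a copy so callers can resume from it without touching history.
        return copy.deepcopy(self._snapshots[version])

    def latest_version(self) -> int:
        return len(self._snapshots) - 1


log = CheckpointLog()
state: Dict[str, Any] = {"step": 0, "notes": []}
for step in range(1, 4):
    state["step"] = step
    state["notes"].append(f"finished step {step}")
    log.save(state)

# "Time travel": rewind to the snapshot taken after step 2 (version 1).
rewound = log.load(1)
# Crash recovery: resume from the most recent snapshot.
resumed = log.load(log.latest_version())
```

Because every snapshot is an independent copy, replaying from an older version never corrupts the newer ones; swapping the in-memory list for a database table would keep the same interface.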

"LangGraph gives you the most control over complex branching workflows... but the learning curve is steep and front-loaded." - Kai Renner, Senior AI/ML Engineering Leader

Integration Ease

As part of the LangChain ecosystem, LangGraph taps into over 2,000 integrations with vector databases and SaaS APIs. Teams can adopt it gradually by converting specific linear workflows into graph nodes. However, understanding graph-based state management is essential, which adds to the learning curve. Even so, LangGraph reduces state management overhead by 65% compared to manual TypedDict implementations.

Scalability

LangGraph is designed for scalability, supporting distributed graph execution, parallel node processing, and sharding to handle workloads across clusters. It performs well with long-running processes, offering horizontal scaling and task queues. For enterprise deployments, pairing LangGraph with LangSmith is highly recommended. LangSmith’s observability tools, including "Time Travel" debugging, make it easier to diagnose errors or infinite loops.

Pricing

LangGraph’s core framework is open source under MIT/Apache 2.0 licenses. For managed hosting, LangGraph Cloud costs $0.003/minute, with enterprise plans offering dedicated clusters. LangSmith observability is free for up to 5,000 traces per month, with a Plus tier available at $39 per seat per month, plus additional usage fees.

2. LlamaIndex

Where LangGraph centers on graph-based orchestration, LlamaIndex prioritizes precise data retrieval: its primary goal is to connect LLMs directly to your data through retrieval-augmented generation (RAG) and data indexing, rather than through intricate workflow graphs. It has over 30,000 GitHub stars and a RAGAS score of 0.81, compared with 0.72 for general-purpose frameworks, and is used by companies like DataCorp to break down user queries and route them to the right data sources. That retrieval layer is the foundation for its multi-agent workflows and integrations.

Multi-Agent Capabilities

Initially designed as a RAG tool, LlamaIndex has evolved to support multi-agent workflows through its Workflow module. It offers pre-built agents like FunctionAgent and ReActAgent, which manage sequential tool usage and asynchronous callbacks. The AgentWorkflow abstraction allows specialized agents, such as ResearchAgent and WriteAgent, to share information through an initial_state dictionary and delegate tasks effectively. This makes LlamaIndex a strong choice for agent-driven document processing, extending its functionality beyond simple Q&A tasks.

Persistence

LlamaIndex primarily stores data in memory but includes options for saving indices to disk using storage_context.persist() (e.g., saving to ./storage). For production environments, it supports remote backends via fsspec for cloud storage like S3 and databases like MongoDB that offer automatic persistence. When deploying workflows through WorkflowServer, you can choose between SqliteWorkflowStore for local persistence or DBOSRuntime (Postgres-backed) for managing distributed systems at scale. Additionally, Redis snapshots can be used to save workflow states during critical moments, enabling recovery from crashes and resuming processes from the last saved point.

Integration Ease

LlamaIndex stands out for its integration capabilities, thanks to LlamaHub, which provides over 150 data connectors for platforms like Notion, Google Drive, Slack, databases, and PDFs. Its modular design allows easy swapping of parsers, loaders, embedders, and vector databases without the need for extensive code changes. You can also integrate LlamaIndex query engines into LangChain agents, combining its robust retrieval capabilities with advanced orchestration. For structured outputs, it works seamlessly with Microsoft Guidance and Pydantic, ensuring smooth data flow into enterprise systems. With an average query latency of 0.9 seconds, it outpaces general-purpose frameworks, which average 1.2 seconds.

"LlamaIndex's query engines handle the complexity of breaking down user questions and routing them to the right data sources automatically, which saves weeks of development time." - Dr. Sarah Chen, AI Research Lead, DataCorp

Scalability

LlamaIndex supports incremental indexing, allowing new documents to be added without rebuilding the entire index - a feature that significantly reduces compute costs for expanding knowledge bases. It achieves a context window utilization rate of 78%, compared to 65% for competitors, resulting in lower API expenses for high-volume operations. The framework can process 350+ requests per second when paired with enterprise gateways. For organizations with complex document parsing needs, LlamaCloud provides managed infrastructure capable of handling formats like PDFs with embedded tables and charts.
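Incremental indexing is easier to reason about with a toy example. The sketch below is not LlamaIndex code: `TinyIndex` is a hypothetical keyword index (LlamaIndex works with embeddings and vector stores), used only to show why adding a document touches just that document's terms instead of rebuilding everything.

```python
from collections import defaultdict
from typing import Dict, List, Set


class TinyIndex:
    """A toy inverted index: term -> set of document ids."""

    def __init__(self) -> None:
        self.postings: Dict[str, Set[str]] = defaultdict(set)
        self.indexed_ids: Set[str] = set()

    def add(self, doc_id: str, text: str) -> bool:
        # Skip documents that are already indexed; only new ones cost work.
        if doc_id in self.indexed_ids:
            return False
        for term in text.lower().split():
            self.postings[term].add(doc_id)
        self.indexed_ids.add(doc_id)
        return True

    def search(self, term: str) -> List[str]:
        # .get avoids creating empty entries for unknown terms.
        return sorted(self.postings.get(term.lower(), set()))


index = TinyIndex()
index.add("a", "Refund policy for flights")
index.add("b", "Baggage policy")
duplicate_added = index.add("a", "Refund policy for flights")  # already indexed
hits = index.search("policy")
```

The second call with id `"a"` is a no-op, which is the property that keeps compute costs flat as a knowledge base grows.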

Pricing

The core LlamaIndex framework is open source under the MIT License, making it free to use. For additional capabilities, LlamaCloud offers a free tier supporting up to 1,000 pages per month. The Pro plan, priced at $250 per month, includes support for 100,000 pages, while custom enterprise pricing is available for larger-scale needs. Organizations requiring dedicated help can also access enterprise support through tailored consulting agreements.

3. CrewAI

CrewAI sets itself apart by mimicking the dynamics of human teamwork. Instead of relying on intricate workflow graphs, it introduces "Crews" - teams of specialized AI agents that collaborate autonomously to achieve specific tasks. As of March 2026, this framework handles over 450 million workflows monthly and has certified more than 100,000 developers. A standout example is PwC, which boosted its code-generation accuracy from 10% to 70% after adopting CrewAI workflows.

Multi-Agent Capabilities

One of CrewAI's key strengths lies in its ability to manage multiple agents effectively. It differentiates between Crews (focused on communication and task delegation among agents) and Flows (which handle stateful, event-driven application logic). The framework supports both sequential execution and hierarchical orchestration, where a "manager" agent oversees the activities of others. Its planning feature automatically generates a step-by-step roadmap before execution, ensuring agents are aligned on the task structure.
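The difference between sequential execution and hierarchical orchestration can be sketched in a few lines. This is an illustration under stated assumptions rather than CrewAI's API: `RoleAgent`, `run_sequential`, and `run_hierarchical` are hypothetical names, and the lambdas stand in for LLM-backed agents.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class RoleAgent:
    role: str
    work: Callable[[str], str]  # takes the running context, returns new output


def run_sequential(agents: List[RoleAgent], task: str) -> str:
    # Each agent receives the previous agent's output as its context.
    context = task
    for agent in agents:
        context = agent.work(context)
    return context


def run_hierarchical(manager: Callable[[str], List[str]],
                     workers: Dict[str, RoleAgent], task: str) -> List[str]:
    # The manager decides which roles to involve; each worker gets the task.
    return [workers[role].work(task) for role in manager(task)]


researcher = RoleAgent("researcher", lambda ctx: f"notes({ctx})")
writer = RoleAgent("writer", lambda ctx: f"draft({ctx})")

sequential_result = run_sequential([researcher, writer], "pricing page")
hierarchical_result = run_hierarchical(
    lambda task: ["writer", "researcher"],  # toy manager: fixed delegation order
    {"researcher": researcher, "writer": writer},
    "pricing page",
)
```

In the sequential run, context accumulates from agent to agent, which is also where the context growth discussed later in this article comes from; in the hierarchical run, the manager controls who works on what.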

In March 2026, Chris Giordano, General Assembly's Director of Learning and Program Development, highlighted a 90% reduction in curriculum design time thanks to CrewAI's multi-agent system, which helped generate lesson content and instructor guides. The framework also excels at maintaining reliable state tracking across interactions, making it a robust choice for complex business automation workflows.

Persistence

CrewAI's Flows include built-in state management, which tracks progress and maintains context throughout agent interactions. This is particularly useful for production applications. Its Agent Management Platform (AMP) offers centralized management with serverless scaling and persistent storage. For instance, DocuSign reported a 75% faster first-contact time in March 2026 by using CrewAI agents to extract and consolidate lead data from multiple internal systems.

In addition to state management, CrewAI simplifies enterprise system integration through its declarative API.

Integration Ease

Connecting CrewAI to enterprise tools is straightforward, thanks to its declarative API. It seamlessly integrates with platforms like Gmail, Slack, Jira, and Salesforce via OAuth. The crewai-tools package and Merge Agent Handler Tool provide access to hundreds of third-party services through a unified API. For non-OpenAI models, setting memory=False prevents authentication errors. The command-line interface (CLI) further simplifies setup with commands like crewai create crew and crewai create flow, offering pre-built project scaffolding for rapid prototyping.

"CrewAI's declarative API makes it significantly faster to prototype multi-agent systems than LangChain or AutoGen." - Conbersa

Scalability

CrewAI is designed for speed and efficiency, making it a strong choice for time-sensitive workloads. Benchmarks show it can be up to 5.76x faster than LangGraph for certain question-answering tasks. However, performance may decline beyond eight sequential steps due to cumulative context limitations. For larger-scale deployments, the CrewAI AMP provides additional tools like visual studio integration, centralized management, and round-the-clock support.

Pricing

The base CrewAI framework is open-source and free under the MIT License. For enterprises, the CrewAI AMP offers a paid option featuring centralized management and 24/7 support. Enterprise integrations require an active AMP subscription and a compatible cloud billing setup.

With its focus on agile multi-agent orchestration, CrewAI stands out as a compelling solution for businesses seeking efficient and scalable AI frameworks.

4. Microsoft Semantic Kernel

Microsoft Semantic Kernel connects large language models (LLMs) with enterprise systems by linking natural language prompts to business logic in C#, Python, or Java through a plugin-based architecture. Downloads grew from 1 million in April 2024 to 2.6 million by February 2025, and by early 2026 the framework had gained over 27,000 stars on GitHub.

Multi-Agent Capabilities

Semantic Kernel uses a planner to create sequences of functions and plugins aimed at achieving specific goals. However, developers need to invest more effort to fine-tune multi-agent behaviors. The framework includes built-in telemetry through OpenTelemetry, simplifying the debugging of complex agent interactions in production. Microsoft is also integrating features from AutoGen into Semantic Kernel to enhance its multi-agent capabilities, ensuring tighter alignment with enterprise needs.

"Semantic Kernel is production-ready with built-in features that are off by default but available when needed. One such feature is observability." - Tao Chen, Microsoft

Integration Ease

Semantic Kernel is designed to seamlessly integrate AI into existing enterprise systems. Its plugin system transforms business logic into AI-accessible tools, enabling LLMs to decide which functions to run. The framework works closely with Azure services, including Azure AI Foundry for tracing and Azure Functions for serverless deployment. It also supports enterprise-grade patterns like dependency injection, making it especially appealing for C# developers in the .NET ecosystem. Despite its Azure alignment, Semantic Kernel is model-agnostic and can work with OpenAI, Hugging Face, or various local models.
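The plugin idea, where business logic is exposed as described functions that a model can choose between, can be sketched as a small registry. None of this is Semantic Kernel's API: the `tool` decorator, `REGISTRY`, and `dispatch` are hypothetical, and the dictionary passed to `dispatch` stands in for the structured function call a model would emit.

```python
from typing import Any, Callable, Dict

REGISTRY: Dict[str, Dict[str, Any]] = {}


def tool(description: str) -> Callable[[Callable[..., str]], Callable[..., str]]:
    # Hypothetical decorator: registers a plain function with a description
    # that a model could read when deciding which function to call.
    def register(fn: Callable[..., str]) -> Callable[..., str]:
        REGISTRY[fn.__name__] = {"description": description, "fn": fn}
        return fn
    return register


@tool("Look up the status of an order by its id.")
def order_status(order_id: str) -> str:
    return f"order {order_id}: shipped"


@tool("Return the refund policy text.")
def refund_policy() -> str:
    return "refunds within 30 days"


def dispatch(call: Dict[str, Any]) -> str:
    # Look up the requested function by name and invoke it with its arguments.
    entry = REGISTRY[call["name"]]
    return entry["fn"](**call.get("arguments", {}))


result = dispatch({"name": "order_status", "arguments": {"order_id": "A17"}})
```

Keeping the functions as ordinary typed code is what makes this pattern fit dependency injection and unit testing: each plugin can be tested without a model in the loop.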

Scalability

For large-scale deployments, Semantic Kernel offers persistent state management through the IStorage interface, supporting databases like Azure Cosmos DB and Azure Blob Storage. Its modular plugin system allows teams to scale AI functions across multiple projects efficiently. Security is a key focus, with features such as prompt injection detection, content filtering via Azure AI, and detailed audit logging for compliance purposes. Strong typing and dependency injection further simplify the management and testing of extensive codebases.

Pricing

Semantic Kernel is available for free under the MIT license. However, any LLM API usage and Azure service integrations are billed according to standard Azure pricing.

5. Microsoft AutoGen

Microsoft AutoGen uses a conversational method to enable AI agents to work together through automated chats, tackling tasks collaboratively. By early 2026, the framework had gained significant recognition, with over 50,400 GitHub stars and 559 contributors. Between 2024 and 2025, Novo Nordisk leveraged AutoGen to create a production-ready multi-agent system, helping their scientific teams extract insights from complex data. In 2025, a B2B SaaS company used AutoGen to automate competitive intelligence, slashing report generation time from 3.5 hours to just 18 minutes while reclaiming 11 of the 12 hours analysts spent weekly on these tasks.

Multi-Agent Capabilities

With the v0.4 update, AutoGen introduced an asynchronous, event-driven architecture based on the actor model. This allows agents to coordinate through messaging with various patterns, such as sequential, concurrent, group, and dynamic handoff orchestration. The "Magentic" coordination feature enables a manager agent to oversee task distribution. For high-stakes business operations, the UserProxyAgent provides human-in-the-loop functionality, allowing users to give feedback or approvals before agents proceed.
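As a rough picture of actor-style, event-driven coordination, the sketch below wires two agents together with `asyncio` queues. It is not AutoGen code: the `actor` coroutine, the queue names, and the `None` shutdown signal are assumptions made for this example, and the "agents" simply annotate the message they receive.

```python
import asyncio
from typing import List, Optional, Tuple


async def actor(name: str, inbox: "asyncio.Queue[Optional[str]]",
                outbox: "asyncio.Queue[Optional[str]]",
                log: List[Tuple[str, str]]) -> None:
    # Each actor owns its inbox and reacts to one message at a time.
    while True:
        message = await inbox.get()
        if message is None:  # shutdown signal, forwarded down the chain
            await outbox.put(None)
            return
        log.append((name, message))
        await outbox.put(f"{message} -> {name}")


async def main() -> List[Tuple[str, str]]:
    log: List[Tuple[str, str]] = []
    to_writer: asyncio.Queue = asyncio.Queue()
    to_reviewer: asyncio.Queue = asyncio.Queue()
    done: asyncio.Queue = asyncio.Queue()
    tasks = [
        asyncio.create_task(actor("writer", to_writer, to_reviewer, log)),
        asyncio.create_task(actor("reviewer", to_reviewer, done, log)),
    ]
    await to_writer.put("task")   # kick off the sequential handoff
    await to_writer.put(None)     # then ask the chain to shut down
    await asyncio.gather(*tasks)
    return log


message_log = asyncio.run(main())
```

Neither actor calls the other directly; they only exchange messages, which is the property that lets this style of runtime spread agents across processes or machines.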

"Capabilities like AutoGen are poised to fundamentally transform and extend what large language models are capable of. This is one of the most exciting developments I have seen in AI recently." - Doug Burger, Technical Fellow, Microsoft

Persistence

Version 0.4 introduced task progress saving and restoration, which ensures that agents can pause and later resume tasks exactly where they left off. This feature is especially useful for workflows that need to survive system restarts or wait for external triggers, such as human approvals or data updates, before continuing.

Integration Ease

AutoGen Studio simplifies development with a low-code interface, enabling teams with minimal coding expertise to prototype quickly. It comes with built-in connectors for PostgreSQL and Microsoft SQL Server, allowing immediate access to business data. Cross-language support between Python and .NET ensures that developers can work in their preferred languages while agents communicate effortlessly. For monitoring and debugging, AutoGen includes OpenTelemetry support, offering industry-standard tools for tracing agent interactions.

Scalability

The framework's gRPC-based distributed runtime enables agents to operate across multiple machines, making it suitable for high-throughput systems. AutoGen's layered architecture, comprising Core, AgentChat, and Extensions, supports scaling for large agent networks. To manage costs, users can set strict max_consecutive_auto_reply limits to prevent agents from entering infinite loops that drain API tokens. Cost efficiency can also be improved by routing simpler tasks to cheaper models like GPT-4o-mini while reserving GPT-4o for more complex reasoning, reducing expenses by up to 65%. Additionally, setting the LLM temperature to 0 makes behavior more consistent in production environments. These scalability features and cost-saving measures highlight AutoGen's appeal for large-scale deployments.
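The two cost controls described above, a cap on automatic replies and routing by task difficulty, can be sketched independently of any framework. Everything here is hypothetical: `pick_model` uses a toy keyword rule, the tier names `"small-model"` and `"large-model"` are placeholders rather than real model identifiers, and `run_with_reply_cap` only imitates what a setting like max_consecutive_auto_reply is meant to achieve.

```python
from typing import Callable, List


def pick_model(task: str, complex_keywords: List[str]) -> str:
    # Toy routing rule: a keyword match decides which model tier handles a task.
    is_complex = any(word in task.lower() for word in complex_keywords)
    return "large-model" if is_complex else "small-model"


def run_with_reply_cap(reply: Callable[[int], str], max_replies: int) -> List[str]:
    # Stop after max_replies automatic turns, or earlier if the agent says DONE.
    transcript: List[str] = []
    for turn in range(max_replies):
        message = reply(turn)
        transcript.append(message)
        if message == "DONE":
            break
    return transcript


models = [
    pick_model(task, ["analyze", "prove"])
    for task in ["Summarize this email", "Analyze churn drivers"]
]
# An agent that never says DONE is cut off at the cap instead of looping forever.
capped = run_with_reply_cap(lambda turn: f"reply {turn}", max_replies=3)
```

A production router would more likely classify tasks with a model or heuristics tuned on real traffic, but the shape is the same: decide the tier first, then bound how long the conversation may run.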

Pricing

AutoGen is free and open-source, licensed under MIT. Costs are limited to LLM API usage and hosting infrastructure. Microsoft plans to merge AutoGen with Semantic Kernel into a unified Microsoft Agent Framework, which is expected to launch in Q1 2026. This release will include enterprise-ready features like role-based access control and compliance certifications.

6. OpenAI Swarm

OpenAI Swarm is built around a streamlined approach to coordinating multiple agents, relying on two main concepts: agents (which handle instructions and tools) and handoffs (explicit transfers of control). As of 2026, OpenAI positions Swarm as an experimental framework aimed at learning and exploration, rather than a production-ready tool. For enterprise use, the OpenAI Agents SDK is the recommended option. In late 2024, BotDojo used Swarm to develop an airline customer service system, featuring a Triage Agent that directed requests to specialized agents handling tasks like flight changes, cancellations, and lost baggage. Their evaluation of GPT-4o, Claude 3.5, and Llama 3.1 revealed that GPT-4o achieved the best accuracy in calling the appropriate tools.

Multi-Agent Capabilities

Swarm enables agents to collaborate using a handoff mechanism, where one agent can transfer control to another by calling a tool that returns an Agent object. During these transitions, the framework maintains a shared conversation history, ensuring continuity. Instead of relying on formal workflow engines, Swarm uses predefined natural language routines to guide agent behavior. This client-side orchestration approach provides developers with full visibility into the process, including message exchanges and tool executions.
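The handoff mechanism is simple enough to imitate in plain Python. The sketch below does not import Swarm: `SketchAgent` and `run` are hypothetical, and a keyword match stands in for the LLM's decision about which tool to call. What it preserves is the core rule described above, namely that a tool returning an agent object transfers control while the conversation history carries over.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple, Union


@dataclass
class SketchAgent:
    name: str
    # Maps a keyword in the user message to a tool; a real agent lets the LLM choose.
    tools: Dict[str, Callable[[], Union[str, "SketchAgent"]]] = field(default_factory=dict)


def run(agent: SketchAgent, user_message: str,
        max_turns: int = 5) -> Tuple[SketchAgent, List[Dict[str, str]]]:
    history: List[Dict[str, str]] = [{"role": "user", "content": user_message}]
    for _ in range(max_turns):
        chosen = next((fn for key, fn in agent.tools.items()
                       if key in user_message.lower()), None)
        if chosen is None:
            history.append({"role": agent.name, "content": "How can I help?"})
            break
        result = chosen()
        if isinstance(result, SketchAgent):
            # Handoff: the tool returned an agent, so control moves to it
            # while the shared history carries over unchanged.
            history.append({"role": agent.name, "content": f"handoff to {result.name}"})
            agent = result
            continue
        history.append({"role": agent.name, "content": result})
        break
    return agent, history


baggage = SketchAgent("baggage", {"lost": lambda: "Filed a lost-baggage claim."})
triage = SketchAgent("triage", {"bag": lambda: baggage})

final_agent, history = run(triage, "I lost my bag")
```

Because the loop runs on the client, every message and tool result is visible in `history`, which mirrors the visibility benefit of client-side orchestration.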

Swarm has been rated 7/10 for autonomy, 9/10 for ease of use, and 8/10 for flexibility. Its reliance on natural language routines and lightweight orchestration sets it apart from more structured frameworks, offering a distinct balance between simplicity and adaptability.

"Swarm focuses on making agent coordination and execution lightweight, highly controllable, and easily testable." - OpenAI Swarm README

Integration Ease

For Python developers, Swarm is easy to integrate. It requires Python 3.10+, an OpenAI API key, and the OpenAI Python SDK v1. Thanks to its use of plain Python functions and clear docstrings, setup involves minimal boilerplate. However, Swarm does not retain session memory or conversation history, leaving developers responsible for managing state persistence and message logs.

To enhance safety, developers can use the execute_tools=False flag, which introduces approval gates requiring human confirmation before execution continues. This makes Swarm particularly suitable for prototyping multi-agent systems, though enterprise teams will need to incorporate external databases for storing conversations and audit logs.

Scalability

Swarm’s client-side design reduces runtime overhead, as it doesn’t rely on server-side infrastructure. However, its stateless nature requires developers to implement safeguards, such as limiting the number of turns, setting tool allowlists, and incorporating human-in-the-loop approval gates to prevent excessive API usage. While Swarm is excellent for prototyping, it’s less equipped for large-scale deployments compared to the OpenAI Agents SDK, which includes built-in features like tracing, guardrails, and session management.
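Two of the safeguards mentioned above, a tool allowlist and an approval gate, might look like the following when written by hand. This is a sketch under assumptions, not part of Swarm: `ALLOWED_TOOLS`, `guarded_execute`, and the `approve` callback are hypothetical names, and in a real system `approve` would prompt a human reviewer rather than return a fixed answer.

```python
from typing import Callable, Dict, List

ALLOWED_TOOLS = {"lookup_flight"}  # hypothetical allowlist


def guarded_execute(tool_calls: List[str],
                    tools: Dict[str, Callable[[], str]],
                    approve: Callable[[str], bool]) -> List[str]:
    results: List[str] = []
    for name in tool_calls:
        if name not in ALLOWED_TOOLS:
            # Never run tools that are not explicitly allowed.
            results.append(f"{name}: blocked (not on allowlist)")
        elif not approve(name):
            # Approval gate: a reviewer can still veto an allowed tool.
            results.append(f"{name}: rejected by reviewer")
        else:
            results.append(f"{name}: {tools[name]()}")
    return results


tools = {
    "lookup_flight": lambda: "on time",
    "cancel_flight": lambda: "cancelled",
}
results = guarded_execute(
    ["lookup_flight", "cancel_flight"], tools, approve=lambda name: True
)
```

Checking the allowlist before asking for approval keeps reviewers from being prompted about calls that would be refused anyway.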

Pricing

Swarm is free and open-source under the MIT License, with no additional framework fees. Costs are limited to OpenAI API usage (charged per token) and any infrastructure needed for state management. Its low infrastructure requirements contribute to a cost efficiency rating of 9/10, making it an economical choice for small to medium-sized projects.

Strengths and Weaknesses

This section provides a summary of the key advantages and drawbacks of each framework discussed earlier. Each one stands out in its own way but also comes with trade-offs.

LangGraph offers unmatched control for managing complex workflows, thanks to its graph-based design. It boasts an impressive 91% task completion rate for sequential tool usage scenarios. However, this level of control comes at a cost - it has the steepest learning curve and the longest median debugging time, clocking in at 47 minutes.

CrewAI, on the other hand, shines in rapid prototyping, as evidenced by its 60% adoption rate among Fortune 500 companies. Yet, it struggles with "context bloat", which leads to a drop in task completion rates - from 84% at five steps to just 61% at 12 steps.

Microsoft AutoGen performs exceptionally well in adaptive, conversational scenarios, achieving an 88% completion rate. However, it lacks the deterministic control needed for more structured tasks. Similarly, Microsoft Semantic Kernel is built for enterprise-level scalability, particularly for .NET and Java environments. But this scalability comes with complexity challenges, making it harder to implement.

LlamaIndex excels in Retrieval-Augmented Generation (RAG) applications, supporting over 160 data connectors and processing more than 500 million documents. However, it is less suited for tasks involving autonomous agent logic.

The OpenAI Agents SDK, the option OpenAI recommends over Swarm for enterprise use, is tightly integrated with OpenAI's ecosystem, achieving a 91% completion rate and a relatively low median debugging time of 22 minutes. However, its reliance on OpenAI's platform introduces vendor lock-in. Organizations using specialized agent frameworks report a 55% reduction in per-agent costs compared to relying solely on platform-based solutions.

Here’s a quick comparison of the frameworks based on core criteria:

| Framework | Multi-Agent Capabilities | Persistence | Integration Ease | Scalability | Pricing |
|---|---|---|---|---|---|
| LangGraph | High (Graph-based state) | Built-in Checkpointing | Difficult (Steep curve) | High (Low latency) | Free (OSS) |
| LlamaIndex | Moderate (Query-focused) | Data Indexing | Moderate | High (Data retrieval) | Free (OSS) |
| CrewAI | High (Role-based) | Built-in Memory | Easy (Intuitive) | Moderate (Context bloat) | Free (OSS) |
| Semantic Kernel | High (Enterprise) | Azure Integration | Difficult (Enterprise) | High (Production) | Free (OSS) |
| AutoGen | High (Conversational) | Conversation Logs | Moderate | High (Adaptive) | Free (OSS) |
| OpenAI Agents SDK | High (Native Handoffs) | Platform-managed | Easy (OpenAI-native) | High (Managed) | Usage-based |

This table offers a concise overview of each framework's strengths and limitations, helping users evaluate which one aligns best with their needs.

Conclusion

Choose an AI integration framework that aligns with your workflow and team strengths. LangGraph is a top choice for managing complex, stateful processes where precise control over loops and branches is essential. It’s particularly well-suited for production-grade automation that may require pauses for human input. For projects centered on connecting LLMs to large document repositories or internal databases, LlamaIndex shines with its strong data connectivity and optimized RAG (retrieval-augmented generation) features.

Each framework caters to specific needs. If speed and simplicity in deployment are your priorities, CrewAI offers an intuitive solution, especially effective for marketing automation and content workflows. On the other hand, Microsoft AutoGen is ideal for conversational AI requiring human oversight, while Semantic Kernel remains a go-to for enterprises operating in .NET and Java environments.

"Frameworks are where innovation happens. Platforms are where deployment happens. The best teams use both."

This quote reflects the current AI landscape, where 68% of production AI agents are built on open-source frameworks. Financially, organizations leveraging dedicated agent frameworks report 55% lower per-agent costs, despite the 2.3x higher initial setup time compared to platform-only solutions. With the global AI market forecasted to hit $900 billion by 2026 and over 33% of enterprise applications expected to integrate AI agents by 2028, making the right framework choice now is critical for long-term success.

To move forward, focus on targeted prototyping. Start with a specific use case that aligns with your team’s expertise - software engineers may prefer LangGraph, while product teams often lean toward CrewAI. Additionally, invest in observability tools like LangSmith or Langfuse from the beginning; their minimal cost can save countless hours of debugging during production.

FAQs

Which framework should I start with for my first AI agent?

For those just starting out, it’s best to choose a framework that's easy to use, adaptable, and comes with strong community support. AutoGPT works well for handling autonomous tasks and has a manageable learning curve. If you're looking to streamline multi-agent workflows, CrewAI makes the process simpler. For those who want more control and flexibility, LangChain provides a modular setup with a broader ecosystem, though it does require some prior experience. Beginners can start with AutoGPT or CrewAI, then transition to LangChain as their skills develop.

How do these frameworks handle state and crash recovery in production?

Durable workflow engines, like Temporal, are designed to handle long-running workflows with a focus on strong execution guarantees and built-in crash recovery. This ensures that processes remain reliable even in the face of unexpected failures. Similarly, tools like CrewAI and LangGraph come equipped with features such as task management, state handling, and mechanisms for retries and recoveries. These capabilities are essential for safeguarding system integrity, minimizing the risk of data loss, and ensuring smooth recovery in production environments.

What’s the fastest way to add tool use and guardrails without over-engineering?

The fastest way to get started is by using AI integration frameworks that come with built-in tools for management and governance. Platforms like LangChain or AutoGen are designed for this purpose, offering features like rapid prototyping, modular setups, and role-based workflows. These capabilities make it easier to set up guardrails - like predefined roles and governance policies - right from the beginning. This approach helps you maintain a balance between speed and control, without adding unnecessary layers of complexity.