Walk into any classroom or office today, and talk of artificial intelligence is never far behind. Chatbots answer customer questions, algorithms suggest movie plots, and smart tools can spin essays in seconds.

At first glance, the gap between AI and human writing seems to shrink every month.

After all, if a machine can draft a sales letter in less time than it takes a person to sip coffee, why bother learning to structure a paragraph?

Some students even whisper, “There are so many ways to write my paper and move on!”

While having a professional service at your side can be a good idea, handing in an unedited AI draft can set you up for trouble.

The question of why anyone should still learn to write sounds playful, yet it points to a serious debate about the future of creativity.

Machines generate text by the gigabyte, but can they really match the spark that makes a reader laugh, cry, or suddenly see the world in a fresh light?

This article explores that puzzle, weighing machines’ growing power against the timeless flair of human imagination.

Ask writers, painters, or songwriters where their best ideas come from, and most will shrug before telling a personal story.

Human creativity sprouts from lived feelings, chance memories, and cultural moments that hit the heart, not just the head.

Unlike code, people grow up hearing lullabies, tasting birthday cake, and trading jokes with friends during storms.

Those layers of sensory experience feed a stew of images that can bubble up years later in a poem or comic strip.

Neuroscientists say the brain crosses distant thoughts in a way no current algorithm copies: it mixes smell with color, sorrow with hope, summer with the sound of rain on a tin roof.

This messy, often illogical mash-up is why a single metaphor can make a reader gasp.

While language models capture patterns, they do not feel the pattern.

That difference fuels the ongoing AI creativity vs human creativity debate, reminding observers that emotion and context are more than data points.

Modern language models, from GPT to open-source clones, do not think in sentences.

They predict the next word based on billions of examples scraped from books, blogs, and tweets.

Statistically, that trick works so well that the output can look like reasoning. Yet under the hood, the system weighs tokens, not meaning.

To many readers, this feels magical; to a data scientist, it behaves much like a huge probability table.
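To make the "probability table" idea concrete, here is a minimal sketch in Python. It is a toy bigram counter, not how GPT-style models are actually built (those use neural networks over sub-word tokens), and the function names and the sample sentence are made up for illustration. It only shows the basic move: count which word tends to follow which, then pick the likeliest.

```python
from collections import Counter, defaultdict


def train_bigram_table(text):
    """Count, for each word, which words follow it in the training text."""
    words = text.lower().split()
    table = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        table[current][following] += 1
    return table


def next_word_probabilities(table, word):
    """Turn the raw follow-up counts for one word into probabilities."""
    counts = table.get(word.lower(), Counter())
    total = sum(counts.values())
    if total == 0:
        return {}
    return {candidate: n / total for candidate, n in counts.items()}


def predict_next(table, word):
    """Return the most probable next word, or None for an unseen word."""
    probs = next_word_probabilities(table, word)
    return max(probs, key=probs.get) if probs else None


# Hypothetical one-line "training set"; real models see billions of examples.
corpus = "the rain fell on the roof and the rain kept falling on the town"
table = train_bigram_table(corpus)

print(next_word_probabilities(table, "the"))  # rain is the most likely follower
print(predict_next(table, "the"))
print(predict_next(table, "jazz"))  # never seen in training, so no prediction
```

The table has no idea what rain is. It only knows that "rain" followed "the" more often than anything else did, which is the point the paragraph above makes about tokens versus meaning.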

On raw writing speed, machines consistently outpace humans.

A prompt as small as a sentence can yield a full article before a human finishes outlining.

But speed hides weaknesses. The model cannot judge whether a joke lands or whether a paragraph repeats itself.

Because it lacks lived memories, it leans heavily on averages, sometimes leading to bland clichés.

The algorithm also mirrors biases soaked into its training data. So while the surface shines, the core remains a reflection, not an originator, of creative thought.

While headlines love an AI vs human showdown, the most exciting work may live in the middle ground where writers team up with smart tools.

Think of the designer who feeds a mood board into a text-to-image model, then adjusts the output with a paintbrush.

Or the novelist who lets a chatbot brainstorm character names, yet still chooses the final arcs by hand.

In these blended studios, AI and human creativity form a loop: the machine suggests, the person curates, then both repeat the cycle.

Some studies from journalism labs suggest that writers who co-create with AI draft first versions faster and spend more time on nuance during revisions.

The technology acts like a tireless intern, offering possibilities that might not surface on a sleepy afternoon.

Still, the human keeps the steering wheel, deciding which idea sings and which belongs in the trash bin.

Collaboration, not competition, may unlock the richest stories.

Every language model is only as broad as its dataset.

If no one on the internet has written about a hidden mountain village in the same style as a jazz lyric, the algorithm cannot invent that fusion on command.

It samples patterns that already exist.

Humans, by contrast, can mash experiences that never share a sentence online.

A traveler may recall a flute melody heard in Peru and blend it with a headline about space travel to craft a fresh metaphor.

That leap from internal memory to outward art defines the human edge in the AI vs human creativity race.

Machines also struggle with long-term narrative arcs. Ask a model to pen a novel, and subplots may vanish or contradict one another by chapter five.

Readers sense the hollowness even if the prose is smooth. Until systems track characters the way people track friends, their imaginative scope will stay boxed by statistics, not by genuine discovery.

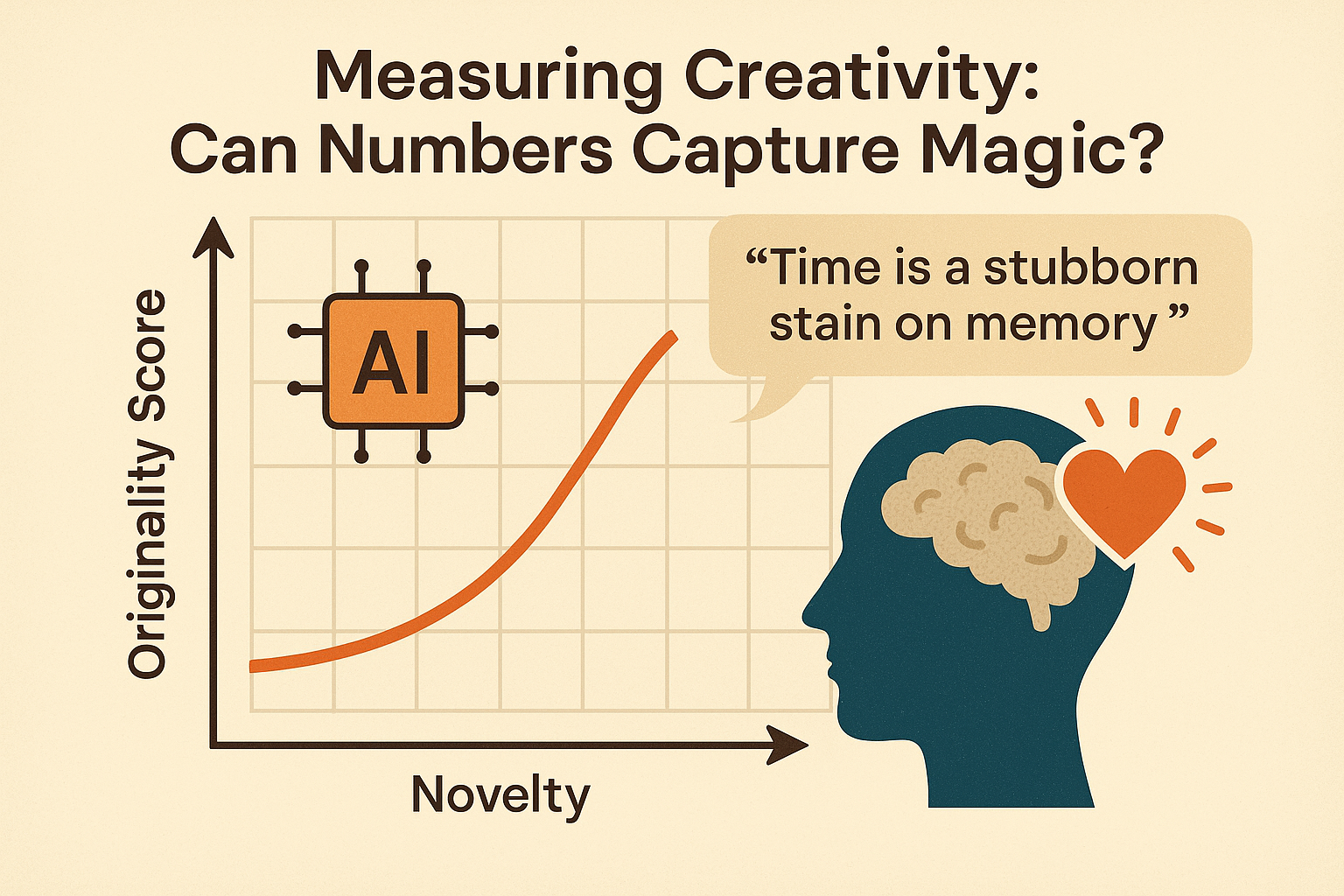

Researchers seeking hard evidence have developed tests that assess novelty, coherence, and emotional impact.

One popular metric, called Originality Score, checks how often certain word pairs appear online. If the combination is rare, the sentence earns points.
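A rough sketch of how a pair-rarity score of this kind could work is shown below. The article does not give the actual formula behind Originality Score, so everything here is an assumption: the `rarity_score` function, the `1 / (1 + count)` weighting, and the tiny reference text standing in for "the internet" are all hypothetical.

```python
from collections import Counter


def bigrams(text):
    """Split text into lowercase adjacent word pairs (punctuation is not handled)."""
    words = text.lower().split()
    return list(zip(words, words[1:]))


def rarity_score(sentence, reference_counts):
    """Average rarity of a sentence's word pairs.

    Each pair earns 1 / (1 + times_seen_in_reference), so an unseen pair
    earns 1.0 and a frequently seen pair earns close to 0. This weighting
    is an illustrative assumption, not the published metric.
    """
    pairs = bigrams(sentence)
    if not pairs:
        return 0.0
    return sum(1.0 / (1 + reference_counts[pair]) for pair in pairs) / len(pairs)


# Hypothetical stand-in for the online text a real metric would count over.
reference_text = "time is money and time is short"
reference_counts = Counter(bigrams(reference_text))

common = rarity_score("time is money", reference_counts)
rare = rarity_score("memory is a stain", reference_counts)

print(f"familiar phrase: {common:.2f}")
print(f"unseen pairs:    {rare:.2f}")
```

A score like this rewards unfamiliar pairings and nothing else. It cannot tell a striking image from random word salad, which is why such numbers say little about whether a line actually moves anyone.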

On paper, these rules allow for clean graphs comparing AI creativity vs human creativity. In practice, they miss the goosebump factor that makes a line unforgettable.

A sentence such as “Time is a stubborn stain on memory” might score low because its words, and pairings like “stubborn stain,” are common.

Yet readers may underline it in a diary. Conversely, a model can string exotic phrases together and still feel empty.

Numbers help spot progress, of course; modern chatbots outperform earlier versions by wide margins.

Yet even top-ranked outputs rarely trigger the full-body reaction that comes from seeing oneself reflected in art.

Until algorithms can surprise both the chart and the heart, metrics will stay informative but incomplete.

For now, they function like speedometers on a winding road—helpful, but incapable of describing the scenery flying past the window.

Storytelling highlights the clearest gap between human and AI authors. In 2022, a tech magazine asked both a novelist and a chatbot to craft a short mystery.

The machine produced tight sentences and a logical plot twist, yet reviewers noted cardboard emotions.

The human version used fewer fancy adjectives but drew readers into the detective’s lonely doubts.

Poetry reveals similar patterns.

Algorithmic haiku often follows the 5-7-5 rule perfectly, yet relies on generic nature imagery. Meanwhile, a human poet may break the syllable count to land an unexpected punch about an abusive childhood.

Humor pushes the divide further. Jokes depend on shared culture and timing.

When an AI attempts stand-up, it sometimes repeats memes that have felt stale for years.

These examples show that competence does not equal charisma.

Machines can check genre boxes, but the spark that turns pages or elicits belly laughs still leans toward the living author today.

Beyond style, the AI vs humans debate carries weighty ethical questions.

If a marketing firm replaces ten copywriters with one prompt engineer, what happens to the voices that once shaped brand stories? Job loss is the obvious fear, but so is homogenization.

When most campaigns arise from the same corporate language model, regional quirks may fade.

Copyright law also lags behind. A human draws inspiration from Shakespeare and is praised; an AI trained on the same texts may be accused of plagiarism even when no line is copied verbatim.

Transparency adds another layer. Readers deserve to know whether the heartfelt letter from a charity came from a volunteer or a server farm.

Finally, accountability matters.

When a chatbot invents facts about medical treatments, who holds the blame?

The programmer, the user, or the investors?

Ethical guardrails must evolve as fast as the code, or trust in digital communication will erode.

Educators are already rethinking lesson plans to prepare students for a blended world of AI and human creativity.

Instead of banning chatbots, some teachers encourage learners to critique machine-generated drafts.

A middle-school class in Texas compares AI summaries with the original texts, highlighting missing context and bias.

The exercise trains critical reading while demonstrating why personal insight still matters.

Coding clubs run the reverse experiment: kids build tiny language models and see firsthand how limited a result appears when data is small.

Such projects demystify the black box and foster humility.

Pedagogues also stress meta-skills—curiosity, empathy, and interdisciplinary thinking—that make human writers unique.

By treating AI as a collaborator to question rather than a cheat sheet to exploit, schools hope to cultivate creators who can steer technology toward richer expression.

The goal is not to crown a winner but to spark lifelong dialogue between tool and thinker for generations to come.

Predicting the endpoint of the AI vs human creativity journey is impossible, but certain trends stand out.

Machines will continue to improve in structure, grammar, and mimicry; humans will continue to own intuition, emotion, and moral judgment.

The frontier, therefore, is less about replacement and more about orchestration.

In the same way calculators freed mathematicians to think about higher problems, language models may free authors to explore deeper themes.

Yet vigilance is essential. Society must demand transparency, protect diverse voices, and invest in tools that amplify rather than flatten originality.

Readers, too, have power: every click signals what stories get reproduced.

By choosing nuanced, heartfelt works, audiences encourage platforms to value personal vision.

Ultimately, the contest may resolve into a duet, not a duel, where AI and human talents blend to paint narratives that neither could achieve alone.

That possibility is hopeful, and for now it remains firmly in human hands.