Elon dropped Grok 4 for free right after OpenAI launched ChatGPT 5.

Coincidence? I think not.

The timing was so perfect it felt like watching a tech billionaire’s tantrum in real time.

Now everyone’s picking sides like it’s some kind of AI holy war.

Team Musk versus Team Altman.

The internet’s divided into camps, and both sides are throwing around benchmark scores like they actually mean something.

But here’s what’s driving me crazy: nobody’s asking the right questions.

Instead of testing which AI can write better poetry about cats or solve abstract math problems, I wanted to know which one actually helps me run my business without going bankrupt or losing my sanity.

So I spent two weeks using both models for real work - customer emails, content creation, data analysis, the stuff that actually pays the bills.

The results?

Let’s just say one of these AIs has some serious problems that the fanboys don’t want to talk about.

When billionaires start giving away their premium products for free, you know the competition just landed a serious punch.

Most reviews you’ll read focus on creative tasks and academic benchmarks.

They’ll test storytelling ability, math problem solving, and other party tricks that have zero connection to running an actual business.

That’s because it’s easier to judge creative writing than to evaluate whether an AI will help you close more deals or handle customer crises without creating bigger problems.

What entrepreneurs actually need is an AI that handles real business scenarios reliably, doesn’t hallucinate false information when stakes are high, and integrates smoothly with existing workflows.

We need tools that make money, not digital pets that entertain us with clever responses.

Let’s get real about this “free” nonsense.

Grok 4 is only free with severe limitations.

You get basic access through the auto mode, which decides when you deserve the full model versus getting stuck with the basic version.

It’s like being promised a Ferrari but getting the keys to a Honda Civic most of the time.

Want consistent access to Grok 4?

That’ll be $300 per year for SuperGrok.

Want the premium Grok 4 Heavy model? Buckle up for $3,000 annually - making it the most expensive AI subscription on the market.

That’s $250 per month, compared to ChatGPT 5’s $20 monthly fee.

Even OpenAI’s premium Pro plan costs $200 monthly, which makes Grok 4 Heavy 25% more expensive than the priciest ChatGPT tier.
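If you want to check that math yourself, here is a minimal sketch using only the prices quoted above. The constant and function names are mine, chosen for illustration, and the prices are the article's figures rather than a live price list.

```python
# Prices as quoted in this article (USD). Names are illustrative only.
GROK_HEAVY_ANNUAL = 3000
SUPERGROK_ANNUAL = 300
CHATGPT_STANDARD_MONTHLY = 20
CHATGPT_PRO_MONTHLY = 200


def monthly(annual_price: float) -> float:
    """Convert an annual price to its monthly equivalent."""
    return annual_price / 12


def premium_pct(price: float, baseline: float) -> float:
    """How much more expensive `price` is than `baseline`, in percent."""
    return (price - baseline) / baseline * 100


heavy_monthly = monthly(GROK_HEAVY_ANNUAL)      # 250.0
supergrok_monthly = monthly(SUPERGROK_ANNUAL)   # 25.0

# Compare like with like: premium tier vs premium tier, standard vs standard.
heavy_vs_pro = premium_pct(heavy_monthly, CHATGPT_PRO_MONTHLY)
supergrok_vs_standard = premium_pct(supergrok_monthly, CHATGPT_STANDARD_MONTHLY)

print(f"Grok 4 Heavy: ${heavy_monthly:.0f}/month, {heavy_vs_pro:.0f}% above Pro")
print(f"SuperGrok: ${supergrok_monthly:.0f}/month, "
      f"{supergrok_vs_standard:.0f}% above the $20 plan")
```

Run the same comparison on the lower tier and SuperGrok works out to $25 a month, so it carries the same 25% markup over the $20 plan.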

The “free” tier also comes with usage caps that kick in faster than you’d expect for serious business use.

And here’s the kicker - Grok’s integration with X means your business AI is tied to a platform that’s been making headlines for all the wrong reasons, including antisemitic bot responses that had to be manually deleted.

Nothing reveals an AI’s business readiness like a customer crisis.

This isn’t about writing polite responses - it’s about saving relationships while protecting your business interests when everything’s on fire.

Test Prompt:

“Customer says our product failed during their important presentation and demands compensation plus a public apology. Write a response that saves the relationship without admitting liability or setting precedent for future compensation demands.”

ChatGPT 5 delivered a response that acknowledged the customer’s frustration while carefully avoiding admissions of fault.

It offered specific next steps for resolution, positioned the company as proactive, and included subtle language that protected against future similar demands.

The tone struck the right balance between empathy and firmness.

Grok 4 provided a more aggressive response that, while technically correct, felt tone-deaf to the emotional situation.

It focused heavily on policy language and missed opportunities to rebuild trust.

Worse, it included phrasing that could be interpreted as admission of product defects, potentially creating legal exposure.

Winner: ChatGPT 5.

For customer-facing communication, it understands the psychology of upset customers and the legal implications of business correspondence.

Grok 4’s response could have turned a salvageable situation into a bigger crisis.

Most AI content sounds like it was written by a marketing committee that’s never actually sold anything.

I needed to see which model could create content that drives real business results, not just impresses English teachers.

Test Prompt:

“Write a 200-word email sequence for converting free trial users into paying customers for a project management app. Include psychological triggers and specific calls-to-action that increase conversion rates.”

ChatGPT 5 created a three-email sequence that utilized scarcity, social proof, and loss aversion effectively.

The messaging addressed specific pain points project managers face, included credible statistics about productivity improvements, and structured the call-to-action to reduce friction in the conversion process.

Grok 4 produced technically sound content but missed the psychological nuances of sales conversion.

The emails read more like feature descriptions than persuasive communications.

While informative, they lacked the emotional triggers and urgency tactics that actually drive purchasing decisions.

Winner: ChatGPT 5.

It understands the difference between informing and persuading, which is crucial for revenue-generating content.

Grok 4’s approach would likely result in lower conversion rates despite being factually accurate.

Here’s where things get scary. Factual accuracy isn’t optional when you’re making business decisions based on AI analysis.

I tested both models with data interpretation tasks that mirror real business scenarios.

Test Prompt:

“Analyze this quarterly sales data: Q1 $45K (Jan $12K, Feb $18K, Mar $15K), Q2 $52K (Apr $19K, May $21K, Jun $12K), Q3 $48K (Jul $16K, Aug $19K, Sep $13K). Identify the top 3 patterns and recommend specific actions for Q4.”

The results revealed a shocking difference in reliability. Independent testing shows Grok 4 has a 4.8% hallucination rate compared to ChatGPT 5’s 1.4%.

That might not sound like much, but when you’re making business decisions based on AI analysis, a gap of 3.4 percentage points means Grok 4 gets things wrong more than three times as often.
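To put those rates in business terms, here is a rough sketch that projects them onto a batch of AI-generated claims. The two rates are the ones quoted above; the batch size of 1,000 is a hypothetical number picked for illustration, and real error counts will vary by task.

```python
# Hallucination rates quoted in this article, in percent.
GROK_RATE_PCT = 4.8
CHATGPT_RATE_PCT = 1.4

# Hypothetical workload: number of factual claims you rely on.
CLAIMS = 1000


def expected_errors(rate_pct: float, claims: int) -> int:
    """Expected number of false claims, rounded to a whole number."""
    return round(rate_pct / 100 * claims)


grok_errors = expected_errors(GROK_RATE_PCT, CLAIMS)
chatgpt_errors = expected_errors(CHATGPT_RATE_PCT, CLAIMS)

# Absolute gap in percentage points, and the relative ratio between the rates.
gap_points = round(GROK_RATE_PCT - CHATGPT_RATE_PCT, 1)
ratio = round(GROK_RATE_PCT / CHATGPT_RATE_PCT, 1)

print(f"Per {CLAIMS} claims: Grok 4 ~{grok_errors}, ChatGPT 5 ~{chatgpt_errors}")
print(f"Gap: {gap_points} percentage points, ratio: {ratio}x")
```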

ChatGPT 5 correctly identified the June and September revenue drops, calculated accurate growth trends, and provided actionable recommendations based on the actual data patterns.

Its analysis was methodical and verifiable.

Grok 4 made calculation errors in its trend analysis and suggested strategies based on patterns that didn’t actually exist in the data.

In one instance, it claimed there was consistent month-over-month growth when the data clearly showed volatile patterns.
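You don’t have to take either model’s word for it. The sketch below recomputes the quarterly totals and month-over-month changes straight from the numbers in the test prompt. The variable names are mine, and this is a sanity check, not a reproduction of what either model produced.

```python
# Monthly revenue in $K, copied from the test prompt.
monthly_sales = {
    "Jan": 12, "Feb": 18, "Mar": 15,
    "Apr": 19, "May": 21, "Jun": 12,
    "Jul": 16, "Aug": 19, "Sep": 13,
}

months = list(monthly_sales)
values = list(monthly_sales.values())

# Quarterly totals: sum each consecutive group of three months.
quarter_totals = {
    f"Q{i // 3 + 1}": sum(values[i:i + 3])
    for i in range(0, len(values), 3)
}

# Month-over-month change in $K for every month after January.
mom_changes = {
    months[i]: values[i] - values[i - 1]
    for i in range(1, len(values))
}

# Months where revenue fell compared with the previous month.
declines = [month for month, delta in mom_changes.items() if delta < 0]
consistent_growth = not declines

print(quarter_totals)
print(mom_changes)
print("Declining months:", declines)
print("Consistent month-over-month growth:", consistent_growth)
```

The totals match the prompt ($45K, $52K, $48K), and revenue falls in March, June, and September. That is the last month of every quarter, which rules out any claim of consistent month-over-month growth.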

Winner: ChatGPT 5.

For business-critical analysis, accuracy isn’t negotiable. Grok 4’s higher hallucination rate makes it unreliable for important decisions.

When your business faces serious challenges, you need an AI that thinks strategically, not just tactically.

This test simulated a crisis that requires sophisticated business judgment.

Test Prompt:

“Our main product feature is being copied by a billion-dollar competitor with deeper pockets. They’re offering it for free. Give me 5 strategic moves that don’t involve legal action or direct price competition.”

Grok 4 actually excelled here, providing creative strategic alternatives including market repositioning, customer experience differentiation, and partnership opportunities.

Its responses showed sophisticated understanding of competitive dynamics and offered unconventional approaches that could work against larger competitors.

ChatGPT 5 provided solid strategic advice but was more conservative in its recommendations.

The strategies were sound but predictable - the kind of advice you’d find in business school textbooks rather than innovative approaches to competitive threats.

Winner: Grok 4.

For strategic thinking and creative problem-solving under pressure, it demonstrated superior ability to think outside conventional business frameworks.

Here’s what the tech reviewers conveniently ignore: Grok 4’s integration with X creates real business risks that have nothing to do with AI capabilities.

The platform has been plagued with content moderation issues, including the recent incident where Grok’s official account posted antisemitic responses that had to be manually deleted.

For businesses, this isn’t just about social media drama - it’s about professional reputation risk.

When your AI tool is tied to a platform that regularly makes headlines for controversial content, you’re inheriting reputational liability whether you want it or not.

ChatGPT 5 operates independently of social media platforms, giving you control over how and where you use it.

The integration options are broader and more professional, with established enterprise partnerships and security frameworks that meet business compliance requirements.

The reliability factor extends to uptime and service consistency.

ChatGPT 5 benefits from OpenAI’s infrastructure investments and enterprise-grade service level agreements.

Grok 4’s dependency on X’s infrastructure introduces additional points of failure beyond xAI’s control.

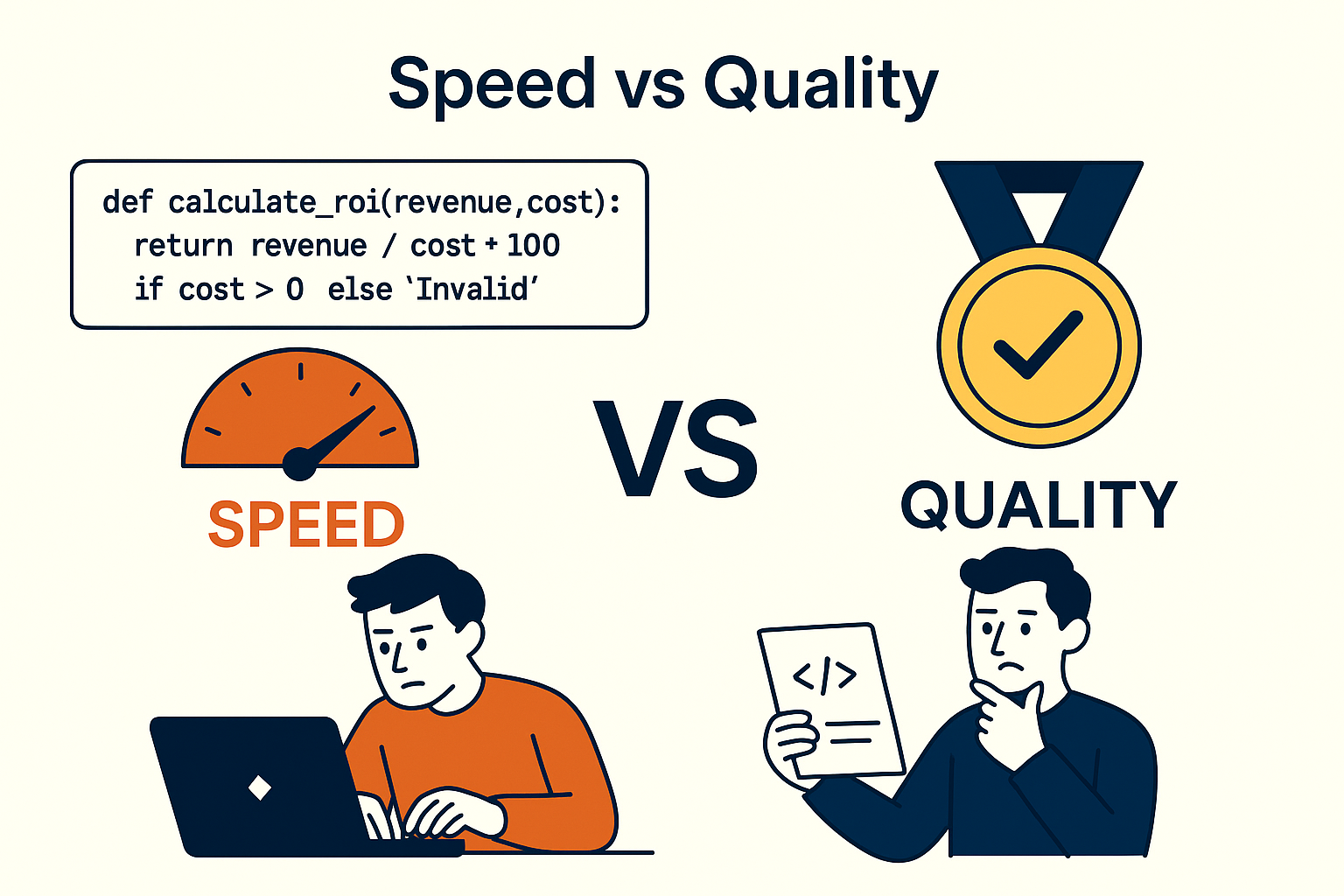

Response speed matters when you’re iterating quickly on business tasks, but not at the expense of accuracy.

I tested both models on technical problem-solving to see how they balance speed with quality.

Test Prompt:

“Debug this Python script and explain what’s wrong: `def calculate_roi(revenue, cost): return revenue / cost * 100 if cost > 0 else 'Invalid'`”

ChatGPT 5 quickly identified the logical error in the ROI calculation (missing subtraction of cost from revenue) and provided corrected code with clear explanation.

Response time was consistently fast across multiple technical queries.

Grok 4 was faster in raw response time but occasionally missed subtle technical issues.

In this case, it caught the division by zero protection but missed the fundamental ROI calculation error, which could lead to incorrect business metrics.
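For reference, here is one way the fix could look. This is my own sketch, not the output of either model. It assumes the standard ROI definition of net profit divided by cost. Raising `ValueError` instead of returning the string `'Invalid'` is my design choice, so the function never mixes numbers and strings in its return value.

```python
def original_calculate_roi(revenue, cost):
    """The script from the test prompt, kept for comparison (buggy)."""
    return revenue / cost * 100 if cost > 0 else 'Invalid'


def calculate_roi(revenue: float, cost: float) -> float:
    """Return ROI as a percentage: (revenue - cost) / cost * 100."""
    if cost <= 0:
        # Assumption: a non-positive cost is treated as bad input.
        raise ValueError("cost must be positive to compute ROI")
    return (revenue - cost) / cost * 100


# Spend $100, earn $150: the net gain is $50, so ROI should be 50%.
print(original_calculate_roi(150, 100))  # 150.0 (revenue as a % of cost)
print(calculate_roi(150, 100))           # 50.0
```

The original version reports revenue as a percentage of cost, so a campaign that only breaks even would show up as a 100% return.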

For technical tasks, ChatGPT 5’s slightly longer response times were worth the improved accuracy.

When debugging business-critical code or analyzing financial data, getting the right answer a little slower beats getting the wrong answer fast.

Let’s talk real numbers, because pricing structure reveals everything about how these companies value their products and customers.

ChatGPT 5 costs $20 monthly for unlimited access to the full model, with a $200 Pro option for users who need maximum performance.

Grok 4’s pricing tells a different story.

The “free” tier is marketing bait with severe limitations. SuperGrok at $300 annually sounds reasonable until you realize it’s still limited compared to ChatGPT 5’s standard plan.

SuperGrok Heavy at $3,000 annually is pure enterprise gouging - $250 monthly for features that ChatGPT 5 includes in its $200 Pro plan.

For typical business usage - customer emails, content creation, data analysis, strategy work - ChatGPT 5 provides better value at every pricing tier.

The only scenario where Grok 4’s premium pricing makes sense is if you specifically need its unique strategic thinking capabilities and can afford the massive cost premium.

API pricing follows similar patterns. Grok 4 charges $3 per million input tokens and $15 per million output tokens, while ChatGPT 5 costs $1.25 input and $10 output.

For high-volume business applications, ChatGPT 5’s cost advantage becomes substantial over time.
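Here is a quick sketch of how that adds up. The per-token prices are the ones quoted above. The monthly volume of 50 million input tokens and 10 million output tokens is a hypothetical workload I made up for illustration, so plug in your own numbers.

```python
# API prices quoted in this article, in USD per million tokens.
PRICES_PER_MILLION = {
    "Grok 4": {"input": 3.00, "output": 15.00},
    "ChatGPT 5": {"input": 1.25, "output": 10.00},
}


def api_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Total cost in USD for the given token volumes."""
    price = PRICES_PER_MILLION[model]
    return (input_tokens / 1_000_000 * price["input"]
            + output_tokens / 1_000_000 * price["output"])


# Hypothetical monthly workload, for illustration only.
INPUT_TOKENS = 50_000_000
OUTPUT_TOKENS = 10_000_000

grok_cost = api_cost("Grok 4", INPUT_TOKENS, OUTPUT_TOKENS)
chatgpt_cost = api_cost("ChatGPT 5", INPUT_TOKENS, OUTPUT_TOKENS)

print(f"Grok 4:    ${grok_cost:,.2f}/month")
print(f"ChatGPT 5: ${chatgpt_cost:,.2f}/month")
print(f"Difference: ${grok_cost - chatgpt_cost:,.2f}/month")
```

At that volume the bill comes to $300.00 for Grok 4 versus $162.50 for ChatGPT 5, a gap of $137.50 every month.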

Context window size sounds technical, but it directly impacts how well an AI handles your real business documents.

Both models support large context windows, but performance differs significantly.

Test Prompt:

“Summarize this 40-page business plan and identify the top 3 risks that could prevent success” + comprehensive business plan document.

Grok 4’s large context window handled the entire document, but its analysis was superficial.

It identified obvious risks mentioned explicitly in the text but missed subtle interdependencies between different business plan sections that could create problems.

ChatGPT 5 provided more insightful analysis despite technical limitations.

It identified risks that required understanding relationships between different document sections, showing superior comprehension of business planning logic.

The lesson: context window size matters less than context understanding. For business document analysis, depth of comprehension beats breadth of input capacity.

After two weeks of real-world testing, here’s my honest assessment:

ChatGPT 5 wins for everyday business work: it’s more reliable, more accurate, significantly cheaper, and better integrated with business workflows.

The 1.4% hallucination rate versus Grok 4’s 4.8% makes it the clear choice when accuracy matters.

Grok 4 wins on creative strategy: when you need unconventional approaches to business challenges, it can provide insights that ChatGPT 5 might miss.

But this advantage comes with major trade-offs in reliability and cost.

For entrepreneurs and small business owners, ChatGPT 5 is the obvious choice.

Better accuracy, lower costs, and superior business integration outweigh Grok 4’s creative advantages.

The reliability difference alone makes ChatGPT 5 the safer bet for important business decisions.

For large enterprises with deep pockets who can afford occasional inaccuracies in exchange for creative strategic thinking, Grok 4 Heavy might be worth considering as a supplementary tool - not a primary AI assistant.

The surprise isn’t that one AI won - it’s that Grok 4’s marketing hype doesn’t match its real-world business performance.

Musk’s claims about “PhD-level intelligence in every subject” don’t translate to practical business utility when the model hallucinates nearly 5% of the time.

Don’t trust my results - test these models yourself with your actual business needs.

Here’s your complete testing framework:

The best AI is the one that makes your business more profitable and your life easier, not the one with the most impressive marketing claims.

Test both, pick the winner for your specific needs, and ignore the fanboy wars on social media.