Introduction and Basics

AI is evolving rapidly, and OpenAI’s GPT models have been game-changers. GPT-3.5, offered as GPT-3.5 Turbo, was designed primarily for conversational tasks and can produce human-like responses. GPT-4 is a more advanced model, reportedly up to 10 times more capable than its predecessor. It understands context and nuance better and has a significantly higher token limit, which allows longer and more coherent responses. In this article, we compare the two models and test them with a practical example.

💡 Unlock the #1 Best ChatGPT Prompt Library to automate your work with AI.

Key Differences and Capabilities

When it comes to linguistic abilities, GPT-4 surpasses GPT-3.5 in understanding and generating different dialects and emotional tones. It is also better at synthesizing information from multiple sources to answer complex questions, an area where GPT-3.5 often falls short. In terms of creativity and coherence, GPT-4 can produce well-structured stories, poems, and essays, whereas GPT-3.5 can struggle with consistency. GPT-4 also demonstrates much better problem-solving skills, especially in complex mathematical and scientific domains. Its programming capabilities are more advanced, making it a valuable resource for software developers. And unlike GPT-3.5, which is text-centric, GPT-4 can analyze and comment on images and graphics.

Furthermore, GPT-4 includes ethical safeguards designed to minimize undesirable outputs, making it a more reliable and responsible AI model. While GPT-3.5 is free, GPT-4 is available through a ChatGPT Plus subscription, which costs $20 a month. The paid service is generally considered to give more accurate and reliable answers than the free GPT-3.5 version, but it has one downside: a cap of 50 messages per 3 hours. With a cap like that, good-quality prompts save both time and money because fewer messages are wasted; we suggest the God of Prompt prompts.

In summary, while both GPT-3.5 and GPT-4 have their merits, GPT-4 clearly stands out in several respects, from understanding language nuances to solving complex problems. As AI technology continues to advance, these models are likely to offer even more functionality and applications. For now, let’s look at the differences between them in a practical test.

Understanding the Basics

OpenAI revamped its pricing with the introduction of these new models, giving developers a wider range of choices. The 16,000-token version of GPT-3.5-turbo offers a longer context window, but at twice the price of the original: $0.003 per 1,000 input tokens and $0.004 per 1,000 output tokens. The original GPT-3.5-turbo, by contrast, has seen a 25% price reduction, making it more affordable at $0.0015 per 1,000 input tokens and $0.002 per 1,000 output tokens.
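To make the price difference concrete, here is a minimal sketch that estimates the cost of a single request from the per-1,000-token prices quoted above. The dictionary keys and the `estimate_cost` helper are names chosen for this illustration, not part of any OpenAI library, and the token counts in the usage lines are made up.

```python
from decimal import Decimal

# Per-1,000-token prices in USD, copied from the paragraph above.
# The keys are illustrative labels for the two variants discussed.
PRICES = {
    "gpt-3.5-turbo": {"input": Decimal("0.0015"), "output": Decimal("0.002")},
    "gpt-3.5-turbo-16k": {"input": Decimal("0.003"), "output": Decimal("0.004")},
}


def estimate_cost(model: str, input_tokens: int, output_tokens: int) -> Decimal:
    """Return the estimated cost in USD for one request."""
    price = PRICES[model]
    total = input_tokens * price["input"] + output_tokens * price["output"]
    return total / Decimal(1000)


# Example: a prompt of 2,000 tokens that produces a 500-token answer.
standard_cost = estimate_cost("gpt-3.5-turbo", 2000, 500)
long_context_cost = estimate_cost("gpt-3.5-turbo-16k", 2000, 500)
print(f"standard: ${standard_cost}, 16k: ${long_context_cost}")
```

For the same request, the 16,000-token variant costs exactly twice as much, so it only pays off when the extra context is actually needed.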

Function Calling: Bridging Natural Language and Code

One of the pivotal upgrades in both models is the introduction of function calling. With this feature, developers describe their functions to the model, and the model can respond with a structured request to call one of them, which the developer’s own code then executes. This bridges the gap between natural language instructions and code execution, and it opens the door to creating advanced chatbots, transforming natural language into database queries, and extracting structured data from text.

Both GPT-3.5-turbo and GPT-4 have undergone fine-tuning to understand when and how to call a function, ensuring more reliable and structured data outputs. However, the degree of sophistication differs between the two models.
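The sketch below shows roughly what the developer’s side of function calling can look like. It makes no API request: the model’s reply is simulated as a dictionary with a function name and a JSON string of arguments, which is the general shape such a reply takes. The `get_order_status` function, its fake data, and the `dispatch` helper are all hypothetical and exist only for this example.

```python
import json


def get_order_status(order_id: str) -> dict:
    """Hypothetical local function the model may ask us to run."""
    fake_orders = {"A100": "shipped", "A101": "processing"}
    return {"order_id": order_id, "status": fake_orders.get(order_id, "unknown")}


# JSON-Schema-style description that would be sent along with the prompt
# so the model knows the function exists and which arguments it takes.
FUNCTION_SPEC = {
    "name": "get_order_status",
    "description": "Look up the shipping status of an order",
    "parameters": {
        "type": "object",
        "properties": {"order_id": {"type": "string"}},
        "required": ["order_id"],
    },
}

# Only functions listed here may be called on the model's behalf.
REGISTRY = {"get_order_status": get_order_status}


def dispatch(function_call: dict) -> dict:
    """Run the function the model asked for and return its result."""
    name = function_call["name"]
    if name not in REGISTRY:
        raise ValueError(f"Model requested an unknown function: {name}")
    arguments = json.loads(function_call["arguments"])
    return REGISTRY[name](**arguments)


# Simulated model output (assumption: no real API call is made here).
simulated_call = {"name": "get_order_status", "arguments": '{"order_id": "A100"}'}
print(dispatch(simulated_call))
```

Keeping an explicit registry means the model can only trigger functions you have deliberately exposed, which matters because its arguments are generated text and should be treated as untrusted input.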

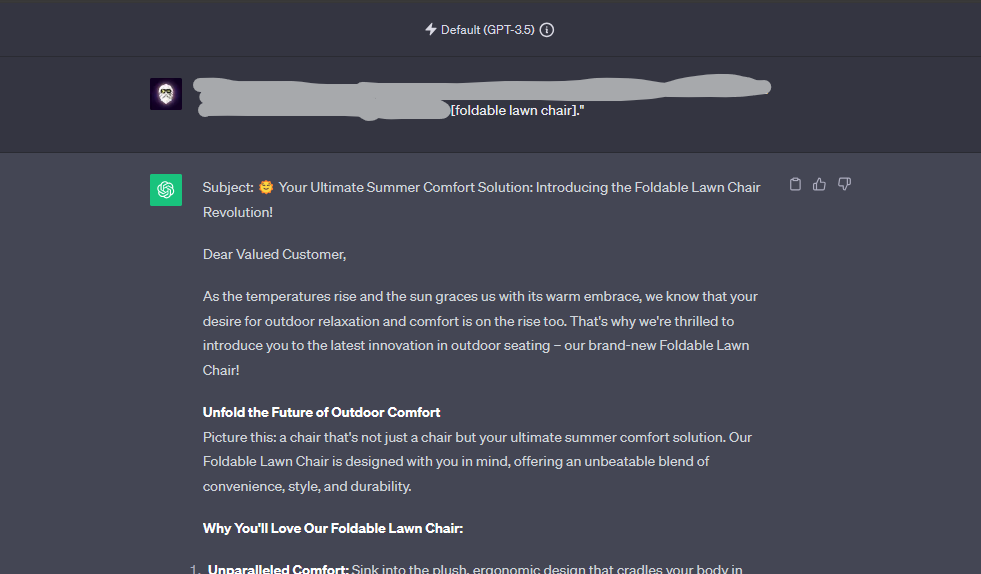

The Experiment: A Test of Quality and Detectability

The Setup

We ran an experiment to compare GPT-3.5 and GPT-4. Both were asked to write a sales email for an imaginary product, a foldable lawn chair, using the God of Prompt marketing pack. We then checked how detectable each result was using gptzero.me.

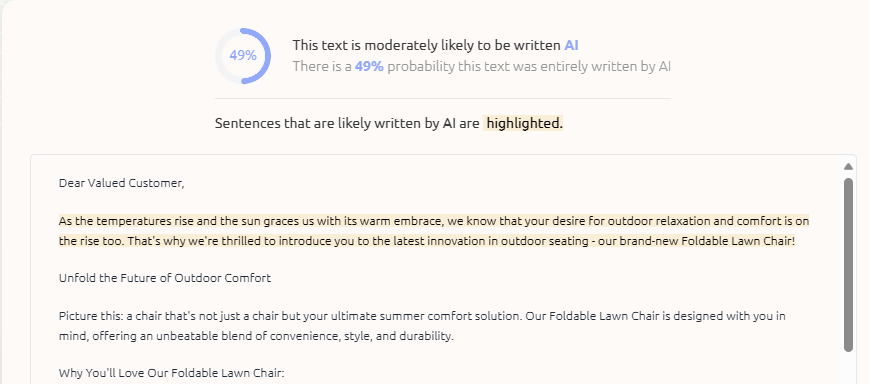

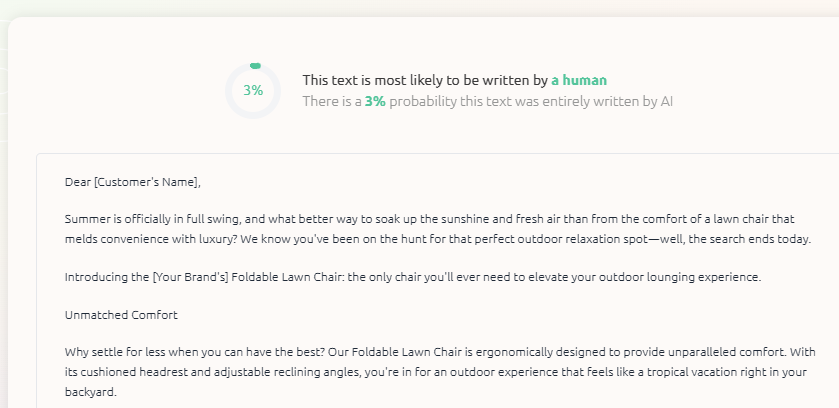

The Results

GPT-3.5 wrote a decent but simple email. It scored quite high on AI detection, though: a whopping 49%.

GPT-4 wrote a better, more engaging email and scored only 3% on AI detection, making it sound much more human.

The results speak for themselves: GPT-3.5 was faster but lower in quality, while GPT-4 took longer but produced the stronger email. It is also worth noting that people who regularly read and work with AI language models develop an eye for spotting AI writing. Testing that would require a different experiment, though, since most stakeholders without that experience rely on detection tools, as we did.

For those in marketing, brand identity, and content creation, these findings are very useful. GPT-4’s ability to generate high-quality content that is hard to detect means less time spent on refining and higher-quality output from the same prompts. The model’s understanding of context and its ability to produce nuanced, engaging content could be a game-changer. In other words: get better results with the same prompts.

Conclusion

The experiment shows GPT-4 is far ahead of GPT-3.5 in quality and stealth. While GPT-3.5 is quicker, GPT-4 offers better content and is harder to detect as AI-generated. GPT-4’s capabilities extend to a wide range of applications, from automating emails to more complex tasks. If you’re looking to use AI, GPT-4 is the smarter choice, offering a glimpse into the future of AI-driven content. If, on the other hand, you do not want to pay OpenAI for a ChatGPT Plus subscription, or you have used up your message limit, you will still get good results with GPT-3.5, but you will need to tweak the output text to sound more human.

In any case, our prompts work with both models; the key difference is that with GPT-3.5 you will need to tweak the text a bit.

Extended Context Window: Enhancing Coherence

The context window in GPT-3.5-turbo has expanded significantly, quadrupling to 16,000 tokens. The larger window improves the model’s ability to recall and reference earlier parts of a conversation. The result is more coherent and relevant responses, with less risk of the AI drifting off-topic.
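In practice, a conversation that outgrows the context window has to be shortened before it is sent, usually by dropping the oldest messages. The following is a rough sketch of that idea. It assumes about four characters per token, which is only a rule of thumb for English text and not the model’s real tokenizer, and the `estimate_tokens` and `trim_history` helpers are hypothetical names used for this example.

```python
def estimate_tokens(text: str) -> int:
    """Crude token estimate (assumption: roughly 4 characters per token)."""
    return max(1, len(text) // 4)


def trim_history(messages: list[str], max_tokens: int) -> list[str]:
    """Keep the most recent messages that fit within max_tokens."""
    kept = []
    used = 0
    # Walk backwards so the newest messages are kept first.
    for message in reversed(messages):
        cost = estimate_tokens(message)
        if used + cost > max_tokens:
            break
        kept.append(message)
        used += cost
    kept.reverse()  # restore chronological order
    return kept


history = ["a" * 40, "b" * 40, "c" * 40]  # about 10 tokens each
print(len(trim_history(history, 20)))     # small window: oldest message dropped
print(len(trim_history(history, 16000)))  # 16,000-token window: everything fits
```

With a quadrupled window, far fewer messages need to be dropped, which is why longer conversations stay on topic more reliably.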

Optimizing Usage Based on Pricing

To harness the full potential of these models, developers must strike a balance between performance and cost, especially given the new pricing structure. Here’s a deeper look into the advantages and disadvantages of each model:

GPT-3.5-turbo

Advantages:

– Lower Cost: GPT-3.5-turbo is the budget-friendly option, making it an excellent choice for cost-conscious projects.

– Sufficient for General Applications: For most applications, such as creating simple chatbots or converting natural language into database queries, GPT-3.5-turbo offers satisfactory performance.

– Lower Resource Requirements: This model can operate effectively with fewer computational resources, making it suitable for resource-constrained environments.

Disadvantages:

– Limited Context Window: If your application demands a more extensive context, the regular GPT-3.5-turbo may fall short due to its relatively smaller context window.

– Less Powerful Function Calling: While GPT-3.5-turbo can perform function calling, it lacks the advanced capabilities of GPT-4 in this regard.

GPT-4

Advantages:

– Enhanced Function Calling: For applications requiring complex function calling, such as developing advanced chatbots, GPT-4 shines due to its improved capabilities in this area.

– Larger Context Window: GPT-4 can process a significantly larger context, making it invaluable for applications that demand extensive historical information.

Disadvantages:

– Higher Cost: GPT-4 comes at a higher price point compared to GPT-3.5-turbo, potentially limiting its suitability for budget-conscious projects.

– Greater Resource Requirements: The increased computational demands of GPT-4 might pose challenges for projects without ample computational resources.

Key Takeaways

Choosing between GPT-3.5-turbo and GPT-4 for prompting depends on your specific project needs and constraints:

– GPT-3.5-turbo is suitable if you’re working on a budget or with limited resources.

– For applications not requiring extensive context memory or complex function calling, GPT-3.5-turbo is a reliable choice.

– If your project demands advanced function calling capabilities or a large context window, GPT-4 is the superior option.

– If budget and resources are not a constraint and you aim for the most advanced AI model, GPT-4 should be your choice.
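These takeaways can be condensed into a small decision helper. This is only one possible reading of the guidance: the `choose_model` function is hypothetical, and it assumes that hard technical requirements (a large context or complex function calling) take priority over budget when the two conflict, a case the list above does not settle.

```python
def choose_model(
    needs_large_context: bool,
    needs_complex_function_calling: bool,
    budget_limited: bool,
) -> str:
    """Suggest a model based on the key takeaways in this article."""
    # Assumption: technical requirements outrank budget concerns.
    if needs_large_context or needs_complex_function_calling:
        return "gpt-4"
    # No special requirements and a tight budget: the cheaper model suffices.
    if budget_limited:
        return "gpt-3.5-turbo"
    # No constraints at all: aim for the most advanced model.
    return "gpt-4"


# A simple chatbot on a tight budget.
print(choose_model(False, False, True))
# A long-document assistant that needs lots of context.
print(choose_model(True, False, True))
```

If budget is the harder constraint for your project, swap the order of the first two checks.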

In the ever-evolving landscape of AI, selecting the right model is pivotal to achieving your project’s goals efficiently and cost-effectively. Whether you opt for the cost-effective GPT-3.5-turbo or the advanced capabilities of GPT-4, both models offer new opportunities for innovation and creativity in natural language processing.