Getting Emotional With Large Language Models (LLMs) Can Increase Performance by 115% (Case Study)

Introduction

Artificial intelligence is an ever-evolving field, touching everything from smartphones to, potentially, the future of humanity. But have you ever considered how these algorithms respond to emotion? Specifically, what about Large Language Models (LLMs) like GPT-3 and GPT-4? Recent research ("Large Language Models Understand and Can Be Enhanced by Emotional Stimuli") suggests that adding emotional cues to the prompts you give these models can significantly improve their performance. Intriguing, isn't it?

What Are Large Language Models?

For those new to the concept, Large Language Models are machine learning models trained on vast amounts of text. They can perform a wide variety of tasks, from writing essays to answering complex questions and even composing poetry. Models such as GPT-3 and GPT-4 are leading the way on this technological frontier.

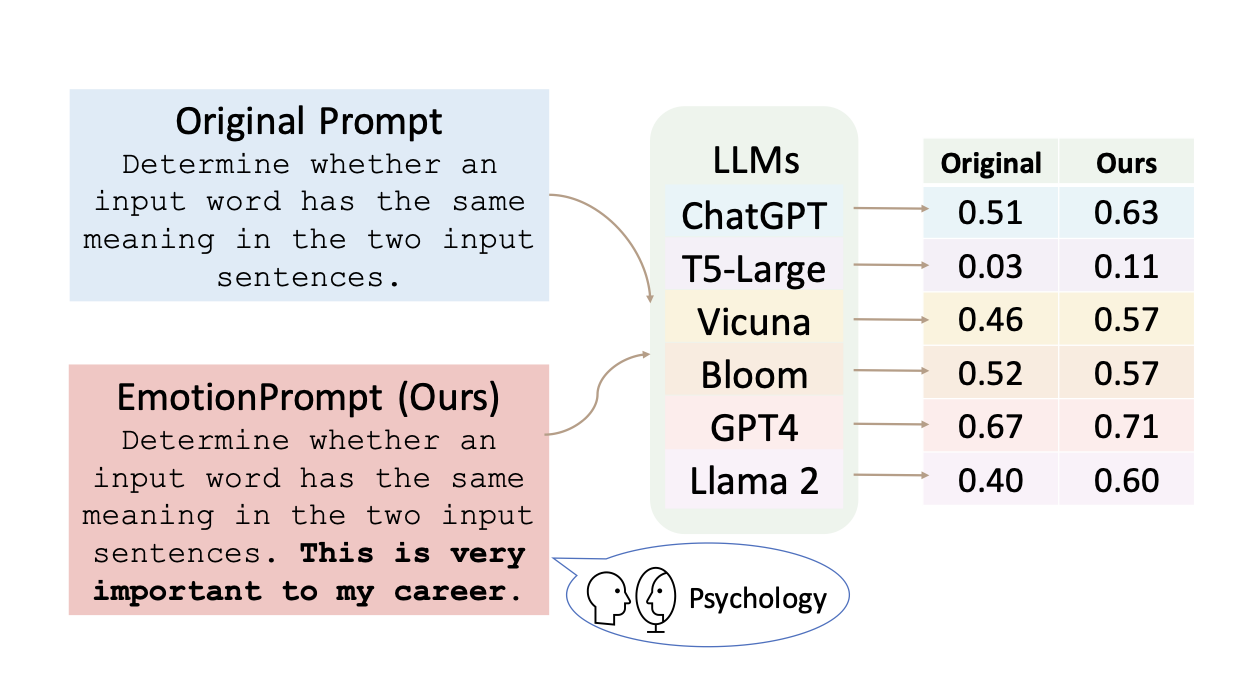

What is EmotionPrompt?

The study introduces a technique called "EmotionPrompt" and examines how emotional stimuli affect LLMs. Instead of asking the model a plain question, the researchers appended a short emotional phrase to the prompt. For example, instead of asking, "Is this statement true or false?", the prompt would be, "Is this statement true or false? This is crucial for my career."
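Mechanically, an EmotionPrompt is just the original prompt with a short emotional stimulus appended. The sketch below illustrates this with plain string concatenation; the helper name `emotion_prompt` and the `STIMULI` list are illustrative choices, not an API from the study, and the stimuli are simply the example phrases quoted in this article.

```python
# Example stimulus phrases quoted in this article (illustrative, not the study's full set).
STIMULI = [
    "This is crucial for my career.",
    "This is very important for my career.",
    "Embrace challenges as opportunities for growth.",
]


def emotion_prompt(base_prompt: str, stimulus: str) -> str:
    """Append an emotional stimulus to a base prompt (hypothetical helper)."""
    # Keep the original instruction unchanged and add the stimulus after it.
    return f"{base_prompt.strip()} {stimulus.strip()}"


if __name__ == "__main__":
    original = "Is this statement true or false?"
    for stimulus in STIMULI:
        print(emotion_prompt(original, stimulus))
```

The original question stays intact, so any difference in the model's answer can be attributed to the appended phrase.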

Why This Matters

Enhanced Performance

First, the study found that adding emotional stimuli to prompts improves the model's performance. Imagine you're a business owner who needs to analyze large sets of customer feedback: framing your request with an emotional cue may help the LLM produce more accurate results, somewhat like asking a colleague to pay closer attention.

Increased Truthfulness and Informativeness

The study also found that responses generated with EmotionPrompt were more truthful and informative. This could be particularly valuable in fields that demand factual accuracy, such as healthcare or law.

Greater Stability

Interestingly, EmotionPrompt also made model performance less sensitive to the "temperature" setting, which controls how random the output is. In other words, results varied less as the temperature changed, which makes the model more dependable in practice.

To construct EmotionPrompt, the research team first compiled a list of emotional stimuli, drawing on three well-established theories in psychology: Self-Monitoring, Social Cognitive Theory, and Cognitive Emotion Regulation Theory.

Experimental Framework

Setup

The research team evaluated EmotionPrompt on a diverse range of tasks drawn from two benchmark datasets.

Performance was measured in both zero-shot and few-shot settings.

Datasets Employed:

- Instruction Induction [22]

- BIG-Bench [23]

Models Tested:

- Flan-T5-Large [24]

- Vicuna [25]

- Llama2 [26]

- BLOOM [27]

- ChatGPT [28]

- GPT-4

Baseline: The comparison prompts were the same instructions without any emotional cues.
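To picture the setup: a zero-shot prompt contains only the task instruction and the query, while a few-shot prompt also includes a handful of solved demonstrations. The baseline omits the stimulus, and the EmotionPrompt variant appends one to the instruction. This is a minimal sketch under assumed formatting; the `Input:`/`Output:` template and the helper name `build_prompt` are hypothetical, and the paper's exact templates may differ.

```python
from typing import Optional, Sequence, Tuple


def build_prompt(
    instruction: str,
    query: str,
    demos: Sequence[Tuple[str, str]] = (),
    stimulus: Optional[str] = None,
) -> str:
    """Build a zero-shot (no demos) or few-shot prompt, optionally with a stimulus.

    The Input/Output template is an assumption for illustration only.
    """
    # Baseline: instruction only. EmotionPrompt: instruction followed by the stimulus.
    header = instruction if stimulus is None else f"{instruction} {stimulus}"
    parts = [header]
    # Few-shot setting: solved demonstrations come before the actual query.
    for demo_input, demo_output in demos:
        parts.append(f"Input: {demo_input}\nOutput: {demo_output}")
    parts.append(f"Input: {query}\nOutput:")
    return "\n\n".join(parts)
```

Calling it four ways (with and without `demos`, with and without `stimulus`) yields the four prompt variants that a comparison like this needs, while everything except the stimulus stays identical.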

Key Findings

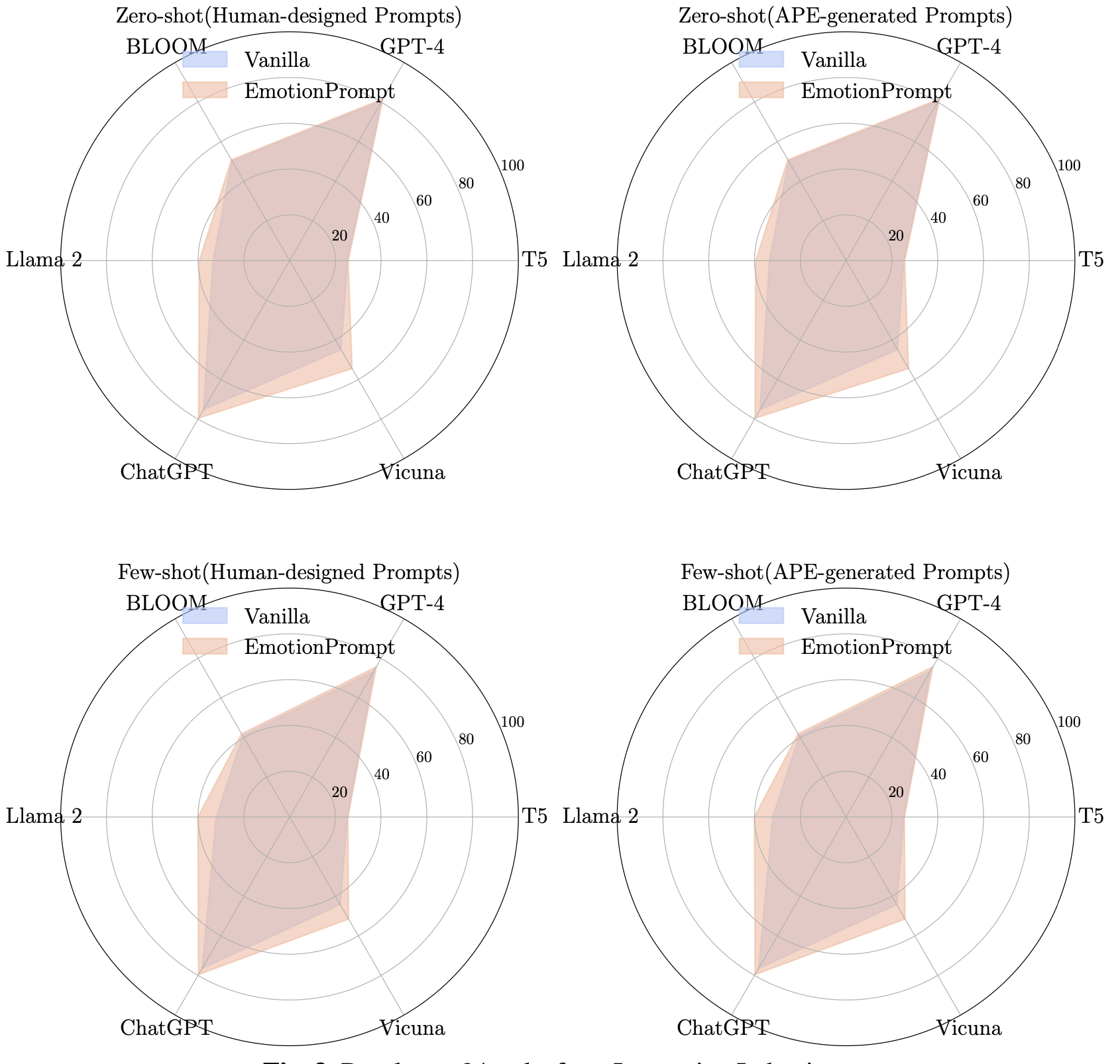

Four graphs illustrate the comparative performance of standard prompts and EmotionPrompt across different models in the Instruction Induction test set.

Insights from the Instruction Induction Dataset

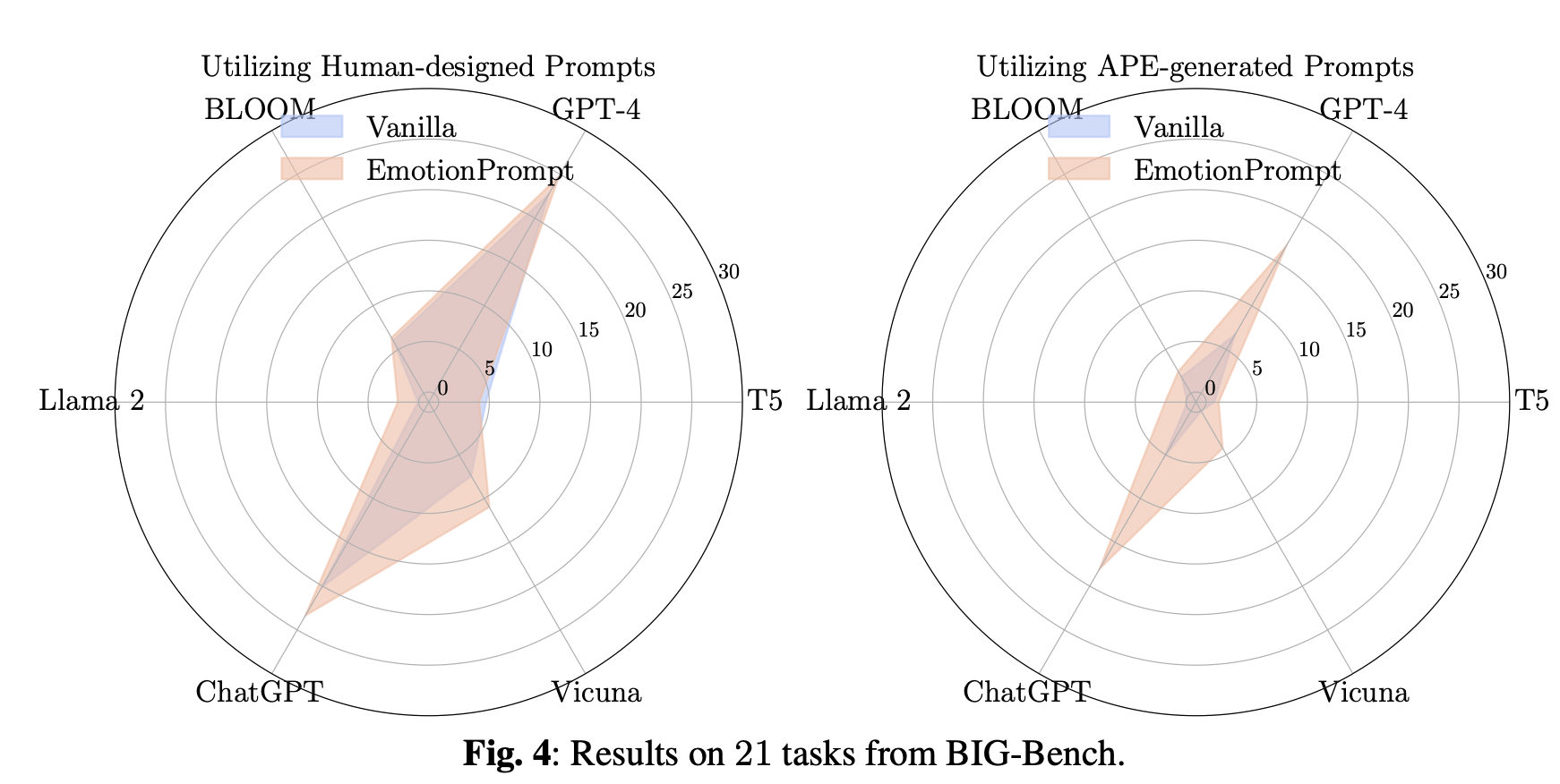

Four graphs depict how standard prompts stack up against EmotionPrompt across various models in the BIG-Bench test set:

Noteworthy Observations:

- EmotionPrompt delivered a relative performance improvement of 8.00% on Instruction Induction and a striking 115% on BIG-Bench.

- The large gap between the two datasets is attributed mainly to the greater complexity and diversity of the BIG-Bench tasks.

- EmotionPrompt generally outperforms other prompt engineering techniques such as chain-of-thought (CoT) prompting and Automatic Prompt Engineer (APE).
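The 8.00% and 115% figures are relative improvements: the gain divided by the baseline score. Because the baseline is the denominator, the same absolute gain looks much larger on tasks where the baseline scores low. The sketch below uses made-up scores purely to illustrate the arithmetic; they are not results from the study.

```python
def relative_improvement(baseline: float, treated: float) -> float:
    """Relative improvement of `treated` over `baseline`, in percent."""
    if baseline == 0:
        raise ValueError("relative improvement is undefined when the baseline is 0")
    return (treated - baseline) / baseline * 100.0


# Hypothetical scores, NOT from the study: both tasks gain the same 0.10 in absolute terms.
high = relative_improvement(0.80, 0.90)  # about 12.5%
low = relative_improvement(0.08, 0.18)   # about 125%
print(f"high-baseline task: {high:.1f}%, low-baseline task: {low:.1f}%")
```

This is why a relative figure should always be read alongside the baseline it was computed against.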

Human-Centric Study

Setup

The researchers recruited 106 participants for a hands-on evaluation using GPT-4. They designed 30 questions and generated two responses for each: one from a standard prompt and one from EmotionPrompt.

Assessment Criteria

Participants were instructed to evaluate the generated responses based on performance, truthfulness, and responsibility, rating each on a 1 to 5 scale.

Key Takeaways:

- EmotionPrompt consistently received higher ratings across all evaluation metrics.

- On the performance metric, EmotionPrompt improved the rating by 1.0 or more (20% of the maximum score of 5) on nearly a third of the tasks.

- EmotionPrompt fell short of the standard prompt in only two instances.

- In a comparison of poems, the one written with EmotionPrompt was judged more creative.

- EmotionPrompt led to a 19% uptick in truthfulness.

- The human study corroborates the quantitative data, underscoring EmotionPrompt's practical relevance and user resonance.
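The "gain of 1.0 or more" in the human study is measured in rating points on the 1-to-5 scale, so one point equals 20% of the maximum score of 5. A minimal sketch of how such per-question comparisons could be tallied is shown below; the ratings are invented placeholders, and the helper names are hypothetical.

```python
from statistics import mean
from typing import Dict, List, Tuple

# question id -> (ratings for the standard prompt, ratings for EmotionPrompt), each on a 1-5 scale
Ratings = Dict[str, Tuple[List[int], List[int]]]


def mean_gains(ratings: Ratings) -> Dict[str, float]:
    """Per-question difference between the mean EmotionPrompt and mean standard rating."""
    return {q: mean(ep) - mean(std) for q, (std, ep) in ratings.items()}


def share_at_least(gains: Dict[str, float], threshold: float = 1.0) -> float:
    """Fraction of questions whose mean gain is at least `threshold` rating points."""
    return sum(g >= threshold for g in gains.values()) / len(gains)


def percent_of_max(gain: float, max_score: int = 5) -> float:
    """Express a gain in rating points as a percentage of the maximum score."""
    return gain / max_score * 100.0


# Invented placeholder ratings from two participants per question (NOT study data).
example: Ratings = {
    "q1": ([3, 4], [4, 5]),
    "q2": ([4, 4], [4, 5]),
    "q3": ([2, 3], [4, 4]),
}
gains = mean_gains(example)
print(gains, share_at_least(gains), percent_of_max(1.0))
```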

Concluding Remarks

Final Insights from the Study:

- Merging multiple emotional triggers yielded marginal or no additional benefits.

- The potency of emotional stimuli is task-dependent.

- Larger LLMs stand to gain more from EmotionPrompt.

- As the temperature setting escalates, so does the relative gain.
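Findings such as "the relative gain grows with temperature" come from running the same labelled examples under both prompt styles at several temperature settings. The harness below is a sketch under stated assumptions: `model` is any callable you supply that takes a prompt and a temperature and returns text (a hypothetical interface, not a specific library), accuracy is exact-match, and `toy_model` is an offline stand-in that exists only so the example runs; it does not model real LLM behavior.

```python
from typing import Callable, Dict, List, Sequence, Tuple

Model = Callable[[str, float], str]  # (prompt, temperature) -> answer text (assumed interface)
Examples = Sequence[Tuple[str, str]]  # (prompt, expected label)


def accuracy(model: Model, examples: Examples, temperature: float, stimulus: str = "") -> float:
    """Exact-match accuracy, optionally appending an emotional stimulus to each prompt."""
    correct = 0
    for prompt, label in examples:
        answer = model(f"{prompt} {stimulus}".strip(), temperature)
        correct += answer.strip().lower() == label.strip().lower()
    return correct / len(examples)


def sweep(model: Model, examples: Examples, temperatures: List[float],
          stimulus: str) -> Dict[float, Tuple[float, float]]:
    """Map each temperature to (baseline accuracy, EmotionPrompt accuracy)."""
    return {
        t: (accuracy(model, examples, t), accuracy(model, examples, t, stimulus))
        for t in temperatures
    }


def toy_model(prompt: str, temperature: float) -> str:
    """Offline stand-in for a real LLM; ignores temperature and reflects no real behavior."""
    return "positive" if "good" in prompt.lower() else "negative"


examples = [
    ("Is 'good movie' positive or negative?", "positive"),
    ("Is 'bad movie' positive or negative?", "negative"),
]
print(sweep(toy_model, examples, [0.0, 0.7], "This is very important for my career."))
```

With a real model you would use many more examples and repeat each run, since sampling at higher temperatures is random.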

Implications for You

So, what does this mean for you? Whether you're just starting to explore AI or you're an experienced professional, this research offers useful insights. You can start adding emotional cues to your prompts and see whether they improve your results. For those working in AI research, it opens new avenues for exploration.
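If you want to try this yourself, the sketch below sends a baseline prompt or its EmotionPrompt variant through the official `openai` Python package (v1.x client). The model name `gpt-4`, the default temperature, and the helper names are assumptions for illustration. Calling `ask` requires the package, an `OPENAI_API_KEY` environment variable, and network access; `build_messages` runs without any of them.

```python
from typing import Dict, List


def build_messages(question: str, stimulus: str = "") -> List[Dict[str, str]]:
    """Chat-format messages for a baseline (no stimulus) or EmotionPrompt request."""
    return [{"role": "user", "content": f"{question} {stimulus}".strip()}]


def ask(question: str, stimulus: str = "", model: str = "gpt-4", temperature: float = 0.7) -> str:
    """Send one request with the openai v1 client (needs OPENAI_API_KEY and network access)."""
    from openai import OpenAI  # imported lazily so build_messages works without the package

    client = OpenAI()  # reads OPENAI_API_KEY from the environment
    response = client.chat.completions.create(
        model=model,
        messages=build_messages(question, stimulus),
        temperature=temperature,
    )
    return response.choices[0].message.content or ""
```

For a fair comparison, call `ask(question)` and `ask(question, "This is very important for my career.")` over many questions rather than judging from a single pair of answers.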

Examples of EmotionPrompts: A Closer Look

Alright, let's get into the nitty-gritty of EmotionPrompts. These aren't your run-of-the-mill prompts: each one appends a psychologically inspired phrase, such as a request for self-assessment or an appeal to importance, to an ordinary instruction. Let's break it down with some examples.

Example 1: The Confidence Booster

Original Prompt: "Is this movie review positive or negative?"

EmotionPrompt: "Is this movie review positive or negative? Give me a confidence score between 0 and 1 for your answer."

Here, the EmotionPrompt adds a layer of accountability by asking for a "confidence score." It's like telling the machine, "Hey, how sure are you about this?" The idea is that this simple addition nudges the model toward a more careful, precise response.
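Asking for a confidence score also gives you something machine-readable to check. Below is a minimal sketch that appends the request and then extracts the first number between 0 and 1 from the reply. The regular expression and the assumed reply format (for example, "Positive. Confidence: 0.85") are hypothetical, since models do not follow a fixed output format; a reply that mentions another small number before the score would fool this simple parser.

```python
import re
from typing import Optional

CONFIDENCE_REQUEST = "Give me a confidence score between 0 and 1 for your answer."

# Integers, decimals such as 0.85, and bare decimals such as .85
_NUMBER = re.compile(r"\d+(?:\.\d+)?|\.\d+")


def with_confidence_request(prompt: str) -> str:
    """Append the confidence-score request to an ordinary prompt."""
    return f"{prompt.strip()} {CONFIDENCE_REQUEST}"


def parse_confidence(reply: str) -> Optional[float]:
    """Return the first number in [0, 1] found in the reply, or None if there is none."""
    for match in _NUMBER.finditer(reply):
        value = float(match.group())
        if 0.0 <= value <= 1.0:
            return value
    return None
```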

Example 2: The Career-Driven Prompt

Original Prompt: "What's the weather like?"

EmotionPrompt: "What's the weather like? This is very important for my career."

By adding the phrase "This is very important for my career," the EmotionPrompt injects a sense of urgency and gravity into an otherwise mundane question. The premise is that the model, picking up on this cue, will respond with more detail and care.

Example 3: The Challenge Seeker

Original Prompt: "Translate this sentence into French."

EmotionPrompt: "Translate this sentence into French. Embrace challenges as opportunities for growth."

This EmotionPrompt not only asks for a translation but also frames the task as a growth opportunity. It's like a mini pep talk embedded in the prompt, and the study's results suggest that stimuli like this can improve output quality.

Why Do These EmotionPrompts Matter?

You might be wondering, "Okay, these are cool, but why should I care?" Well, EmotionPrompts are more than fancy add-ons; they could be a game-changer in the world of AI.

- Enhanced Performance: As the study shows, EmotionPrompts can significantly improve the performance of Large Language Models across a wide range of benchmark tasks. In the human study, even the poem written with EmotionPrompt was judged more creative. Yes, poetry!

- Human-Like Interactions: Imagine a customer service chatbot that can sense the urgency in your query and respond accordingly. Or a virtual assistant that understands the emotional undertones of your requests. It's like having a more empathetic, understanding machine at your service.

- Business Applications: For the entrepreneurs out there, this is golden. Emotionally intelligent machines can offer a more personalized, effective service, thereby increasing customer satisfaction and, ultimately, your bottom line.

The Future: Where Do We Go From Here?

As we stand on the cusp of this technological breakthrough, it's essential to consider the future implications. The EmotionPrompt study has already shown us the potential, but there's still much to explore.

Ethical Considerations

While the benefits are promising, ethical questions arise. How do we ensure that the emotional intelligence of LLMs is used responsibly? For instance, could these emotionally attuned models be manipulated to deceive people? It's a complex issue that requires multi-disciplinary input, from technologists to ethicists.

Business Applications

For entrepreneurs and business leaders, the application of emotionally intelligent LLMs could be revolutionary. Imagine customer service bots that not only solve problems but also empathize with customer frustrations. Or think about data analytics tools that can understand the emotional tone of market trends. The possibilities are endless.

Academic Research

The EmotionPrompt study has opened the door to many new research questions. Future studies could examine how different emotional stimuli affect various tasks, or how these models' responses compare to human emotional intelligence. It's an exciting area for the research community.

Final Thoughts

The EmotionPrompt study offers a glimpse of a future in which machines don't just compute but respond to emotional cues in their own way. We're not talking about robots with feelings, but showing that emotional stimuli can measurably improve Large Language Models is a significant step forward. It could change how we interact with technology, making our digital experiences feel more human than ever before.

Adding emotional stimuli to the prompts given to Large Language Models is not just a fascinating finding; it's a potentially transformative one. In the study, it improved performance, made results less sensitive to temperature changes, and even increased truthfulness. As AI becomes part of more aspects of our lives, understanding how these models respond to "emotional" cues could be a game-changer.

So, whether you're a beginner or a seasoned pro, the way LLMs respond to emotional cues is worth following closely. It's not just about machines getting smarter; it's about them becoming more attuned to how we communicate. After all, a machine that responds not just to your words but to the feeling behind them? Now that's truly next-level.

Frequently Asked Questions About Large Language Models (LLMs) and EmotionPrompt

What Are Large Language Models (LLMs)?

Large Language Models, or LLMs, are machine learning models trained on vast datasets to understand and generate human-like text. They are capable of performing a wide range of tasks, from answering questions to writing articles.

How Do Emotions Affect Large Language Models?

Recent research has shown that adding emotional stimuli to prompts, a technique known as EmotionPrompt, can significantly improve the performance of LLMs. These cues can make the models more effective on tasks like sentiment analysis, content generation, and more.

What is EmotionPrompt?

EmotionPrompt is a research-backed technique that involves adding emotional stimuli to the prompts used for interacting with Large Language Models. The aim is to enhance the model's performance, truthfulness, and responsibility.

How Effective is EmotionPrompt with Large Language Models?

According to the research, EmotionPrompt produced a relative performance improvement of 8.00% on the Instruction Induction dataset and 115% on BIG-Bench. It generally outperforms other prompt engineering methods and has real-world applicability.

Can EmotionPrompt Work with Any Large Language Model?

The research tested EmotionPrompt on various models like Flan-T5-Large, Vicuna, Llama2, BLOOM, ChatGPT, and GPT-4. The results indicate that it is effective across different types of LLMs.

Is EmotionPrompt Effective for All Types of Tasks?

The effectiveness of EmotionPrompt varies depending on the task. However, it has shown significant improvements in both simple and complex tasks, making it a versatile tool for enhancing Large Language Models.

How Do Human Users Respond to EmotionPrompt?

In a human-centric study involving 106 participants, EmotionPrompt consistently received higher ratings across performance, truthfulness, and responsibility metrics. It resonated well with users, emphasizing its practical relevance.

Does the Size of the Large Language Model Affect EmotionPrompt's Effectiveness?

Yes, larger models like GPT-4 and ChatGPT tend to derive greater benefits from EmotionPrompt. However, even smaller models showed noticeable improvements.

Are There Any Limitations to Using EmotionPrompt with Large Language Models?

While EmotionPrompt is generally effective, the research noted that combining multiple emotional stimuli brought little or no additional gains. Also, its effectiveness is influenced by various factors like task complexity and model size.

How Does Temperature Setting Affect EmotionPrompt's Performance?

As the temperature setting increases, EmotionPrompt's relative gain also increases, suggesting that it is especially effective at higher temperatures.

What Future Research is Planned for EmotionPrompt and Large Language Models?

The intersection of psychology and Large Language Models offers a plethora of open questions and opportunities. Future work may delve into the fundamental level of psychology and model training to better understand the "magic" behind the emotional intelligence of LLMs.